What OpenAI ChatGPT 5.5 and the Layoff Surge Teach Us About the State of Tech in 2026

Artificial intelligence, or AI, is now moving faster than many companies can absorb. OpenAI released GPT-5.5 in April 2026, followed by the GPT-5.5 Instant release as the new default ChatGPT model in early May. At nearly the same time, the technology sector entered another layoff wave, with tens of thousands of jobs cut in the first quarter alone.

It is tempting to connect those signals directly. Faster models, fewer workers. But that is too simple.

The clearer reading is that tech is entering a more demanding operating phase. AI capability is improving. Workforce restructuring is continuing. Yet the link between the two is uneven, indirect, and shaped by economics, leadership choices, and organizational maturity.

The 2026 Tech Story Is Not Just Faster AI or More Layoffs

The state of tech in 2026 is not defined by one model release or one layoff statistic. It is defined by the gap between AI adoption and AI maturity.

The research points in two directions at once. On one hand, frontier models are improving quickly. GPT-5.5 is reported to show gains in reasoning, coding, multimodal performance, and context handling. GPT-5.5 Instant matters because it pushes those capabilities into everyday workflows faster.

On the other hand, layoff evidence remains noisy. Tech layoffs in 2026 are substantial, but multiple labor analyses suggest that AI is not yet causing broad employment disruption at the economy-wide level. Oxford Economics found that only a small share of 2025 U.S. job cuts were officially attributed to AI. Other research found no clear aggregate link between AI exposure and unemployment.

That does not make AI irrelevant. It means leaders should avoid treating layoffs as automatic proof of AI-driven displacement. The better question is where AI is changing tasks, team structures, and investment priorities.

What the GPT-5.5 Instant Release Actually Needs to Prove

GPT-5.5 Instant should not be judged only by benchmark gains. For chief technology officers, chief information officers, founders, and people leaders, the real test is operational.

A model release matters when it changes speed, cost, reliability, reasoning quality, workflow integration, or governance readiness. OpenAI reported meaningful benchmark gains for GPT-5.5 across math, multimodal reasoning, and coding tasks. It also positioned the release around agentic coding, knowledge work, data analysis, and multi-step workflows.

Those are important signals. But they do not automatically translate into enterprise value. A stronger model becomes useful only when it can be evaluated, secured, integrated, monitored, and trusted inside real workflows.

Capability Gains Are Only Useful When They Survive Enterprise Constraints

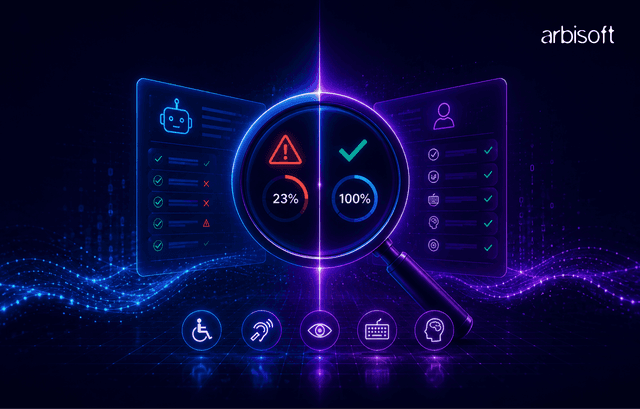

Enterprise AI adoption is now less about access and more about execution. Research cited in the report found that most organizations use AI in at least one function, but only a tiny share describe their AI deployments as fully mature.

That gap matters. Security, privacy, data quality, latency, cost, compliance, and workflow fit can weaken even strong model performance. A model may perform well in benchmarks while still failing a company’s internal evaluation.

Before deploying GPT-5.5 or similar systems at scale, leaders should ask for practical artifacts: system cards, model cards, data processing terms, audit logs, evaluation results, security documentation, and acceptable-use controls. These are not administrative extras. They are the difference between experimentation and operational AI governance.

Faster AI Does Not Automatically Mean Fewer Workers

AI automation means using AI to reduce manual effort in specific workflows. It is not the same as job elimination.

The research shows that many jobs are exposed to AI because parts of those jobs contain automatable tasks. But task exposure is not the same as role replacement. A software engineer may use AI to draft tests or documentation, while still owning architecture, review quality, security, and delivery judgment.

AI-assisted work can increase output without reducing headcount. It can also change what companies expect from each role. The outcome depends on whether demand grows, whether bottlenecks shift, and whether leaders choose to reinvest productivity gains or cut staff.

This is why workforce decisions should start with workflow evidence, not model capability alone.

What the Layoff Surge Can and Cannot Tell Us About AI Automation

The layoff surge is real. The research report cites more than 73,000 tech jobs cut across 95 companies in the first quarter of 2026. Large technology companies have announced major reductions, and some have referenced AI, automation, or productivity in their workforce narratives.

Still, layoffs are a weak signal when read in isolation. They can reflect pandemic over-hiring, high interest rates, margin pressure, investor expectations, strategic pivots, or role-mix changes. AI can be part of that story without being the only driver.

A stronger claim requires stronger evidence. Leaders should look for proof that AI systems are in production for the functions being reduced, that productivity was measured before cuts, and that hiring patterns shifted toward AI, data, infrastructure, and governance roles.

Company Explanations Are Evidence, but Not the Whole Story

Executive statements are useful, but they are not complete evidence. A company may frame layoffs as AI-driven because that sounds strategic to investors. Another may cut roles for financial reasons while expanding AI infrastructure spending.

The right approach is triangulation. Compare what the company says with what it does.

Look at the roles eliminated. Look at the roles still being hired. Review AI investment, tooling adoption, productivity metrics, and organizational redesign. If a company is cutting broad operational layers while hiring AI engineers, data engineers, security specialists, and cloud architects, that suggests role-mix change. If AI investment is vague, the AI explanation deserves more skepticism.

The Most Exposed Work Is Task-Based

The most useful unit of analysis is the task, not the job title. Research on generative AI exposure shows that many roles include tasks AI can assist. In technology companies, exposed tasks include boilerplate code generation, documentation, test case drafting, support triage, reporting, data cleaning, workflow coordination, and content operations.

Less exposed work tends to involve architecture, security judgment, stakeholder alignment, system ownership, incident response, regulatory interpretation, mentorship, and domain-specific accountability.

This distinction matters for workforce planning. A role exposure map should show which tasks can be automated, which can be augmented, and which must remain human-led. Without that map, AI-driven layoffs become guesswork.
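As a minimal sketch of what such a map could look like in practice, the Python below groups tasks by role and by exposure category. The class names, the `human_led_share` helper, and the task labels are illustrative assumptions, not a standard schema; real classifications would need to come from workflow evidence.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class Exposure(Enum):
    AUTOMATABLE = "automatable"
    AUGMENTABLE = "augmentable"
    HUMAN_LED = "human-led"


@dataclass(frozen=True)
class Task:
    role: str
    name: str
    exposure: Exposure


def build_exposure_map(tasks):
    """Group task names by role, then by exposure category."""
    exposure_map = defaultdict(lambda: defaultdict(list))
    for task in tasks:
        exposure_map[task.role][task.exposure].append(task.name)
    return exposure_map


def human_led_share(exposure_map, role):
    """Fraction of a role's listed tasks that must remain human-led."""
    by_exposure = exposure_map[role]
    total = sum(len(names) for names in by_exposure.values())
    if total == 0:
        return 0.0
    return len(by_exposure[Exposure.HUMAN_LED]) / total


# Illustrative labels only (assumption): real labels need workflow evidence.
TASKS = [
    Task("software engineer", "boilerplate code generation", Exposure.AUTOMATABLE),
    Task("software engineer", "test case drafting", Exposure.AUGMENTABLE),
    Task("software engineer", "architecture", Exposure.HUMAN_LED),
    Task("software engineer", "security judgment", Exposure.HUMAN_LED),
]

exposure_map = build_exposure_map(TASKS)
print(human_led_share(exposure_map, "software engineer"))  # 0.5
```

Keeping the task as the unit of record means the same data can answer both planning questions: what can be automated or augmented, and how much of a role still depends on human judgment.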

How AI Is Changing the Tech Workforce in 2026

AI is changing the tech workforce through role redesign more than mass replacement. Teams are expected to deliver more with leaner structures. Junior pathways are shifting. AI, data, cloud, and governance roles remain in demand.

The research points to a split market. Some companies are cutting headcount while hiring for AI infrastructure and operating discipline. Others are experimenting with AI but have not scaled it into production. Many are somewhere between adoption and maturity.

This is the real AI workforce impact in 2026: not a single collapse in demand, but a reallocation of work, skills, and accountability.

Software Teams Are Being Redesigned Around Leverage

Software teams are moving from AI as a coding helper to AI as a first-pass executor across parts of the software development lifecycle. AI can draft code, tests, documentation, and review suggestions. Engineers still need to verify correctness, security, architecture, and maintainability.

The productivity evidence is meaningful: studies cited in the report show faster task completion and more pull requests in AI-assisted environments. But throughput at the drafting stage is not enough. Faster code drafts only improve delivery if review, continuous integration, testing, and quality assurance keep pace.
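The bottleneck argument can be made concrete with a small sketch. It assumes a simplified sequential pipeline in which delivery is capped by the slowest stage; the stage names and weekly capacities are hypothetical numbers chosen for illustration, not measured data.

```python
def delivery_throughput(stage_capacity):
    """Return the bottleneck stage and the changes per week it allows.

    Simplifying assumption: a sequential pipeline cannot ship faster
    than its slowest stage.
    """
    bottleneck = min(stage_capacity, key=stage_capacity.get)
    return bottleneck, stage_capacity[bottleneck]


# Hypothetical weekly capacities (changes per week), not measured data.
before = {"drafting": 20, "review": 25, "ci_and_testing": 30, "qa": 25}
after = {**before, "drafting": 40}  # assume AI doubles drafting speed only

print(delivery_throughput(before))  # ('drafting', 20)
print(delivery_throughput(after))   # ('review', 25)
```

In this toy case, doubling drafting capacity raises delivery from 20 to 25 changes per week, because review becomes the new constraint. The gain is real, but it is far smaller than the drafting speedup suggests.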

The mature software organization will not ask, “How many developers can this replace?” It will ask, “Where does AI increase leverage without weakening code quality, security, or ownership?”

Data and AI Teams Are Moving From Experimentation to Operating Discipline

Production AI depends on data discipline. Strong models still need clean data, retrieval systems, evaluation protocols, monitoring, lineage, and governance.

This is why data engineering, model evaluation, machine learning operations, and AI governance are becoming more important. Pilots can tolerate manual checks and informal ownership. Production systems cannot.

A mature AI operating model needs an AI use-case inventory, model risk register, evaluation framework, monitoring dashboards, data governance policies, and incident response process. Without these, companies may appear AI-active while remaining operationally immature.

People Leaders Now Need a Role Exposure Map, Not a Generic AI Policy

A generic AI policy is not enough. It may say what employees can or cannot do with tools, but it does not explain how work will change.

People leaders need a role exposure map. That map should connect tasks, tools, risks, skills, and workforce decisions. It should identify which tasks are automatable, which are augmentable, which roles need reskilling, and where capability or morale risks may appear.

This is also a trust issue. Employees can usually tell when leaders are using AI language to justify decisions already made. Clear role-level analysis creates a better foundation for communication, reskilling, and workforce redesign.

The Leadership Lesson: Productivity Without Capability Loss

The healthiest technology organizations will use AI to raise leverage without damaging the capabilities that make AI useful.

Productivity is valuable. AI can help teams draft faster, analyze more data, reduce repetitive work, and expand delivery capacity. But capability loss is costly. Companies that cut too deeply can lose domain knowledge, system ownership, mentoring capacity, and the judgment needed to supervise AI output.

The leadership challenge is not choosing between people and AI. It is designing an operating model where AI improves productivity while human expertise protects quality, trust, and resilience.

The Risk of Cutting Too Deeply Is Losing the People Who Make AI Useful

AI systems need people who understand the business, the architecture, the data, and the risks. Senior engineers, domain experts, data engineers, security teams, governance leads, and product owners are often the people who make AI work in production.

These roles are expensive, so they may look attractive in cost-cutting exercises. But removing them can weaken the organization’s ability to evaluate, integrate, and govern AI.

Before cutting, leaders should identify critical knowledge holders, fragile systems, undocumented dependencies, incident response patterns, and mentorship gaps. If the people removed are the same people needed to make AI reliable, the organization is not becoming more efficient. It is creating future execution risk.

The Risk of Moving Too Slowly Is Creating an Execution Gap

Caution has value, but inertia carries its own risk. The research report describes widespread enterprise AI use that often runs ahead of governance. Shadow AI grows when employees bring tools into their work without approval, measurement, or oversight.

That creates a different problem. The company still carries security, privacy, quality, and compliance risks, but without structured benefits.

Signs of an execution gap include long-running pilots with no production decision, no workflow-level measurement, fragmented tooling, no governance owner, generic training, and no role exposure analysis. Leaders do not need to chase every model release. But they do need an operating rhythm for evaluating and governing AI adoption.

A Practical Reading of the Market for CTOs, CIOs, Founders, and People Leaders

The 2026 market should be read through three lenses: task exposure, capability risk, and governance readiness.

| Lens | Core Question | What to Verify |
| --- | --- | --- |
| Task Exposure | Which tasks can AI assist or automate? | Workflow maps, tool usage, productivity baselines |
| Capability Risk | What expertise must be preserved? | System ownership, domain knowledge, incident dependencies |
| Governance Readiness | Can AI be deployed safely at scale? | Evaluation protocols, audit logs, policy ownership |

This framework helps leaders avoid two mistakes. The first is assuming every layoff is AI-driven. The second is assuming AI has no workforce impact because aggregate employment effects remain limited.

The more useful view is that AI is reshaping work before it fully reshapes job counts.

Questions to Ask Before Attributing Layoffs to AI

A practical diagnostic can keep leaders grounded:

- Which roles were affected, and which roles are still being hired?

- Is there a specific AI system in production for the eliminated work?

- Was productivity measured before the reduction?

- Did the company over-hire between 2020 and 2022?

- Are cuts concentrated in automatable workflows or spread broadly?

- Is AI investment rising alongside the cuts?

- Are leaders showing workflow evidence or only using AI language?

- What capability may be lost if these people leave?

If the answers are unclear, AI may still be part of the story. But the causal claim should stay cautious.
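One way to keep the diagnostic honest is to record the answers explicitly, including the ones that are unknown. The sketch below is a hypothetical scoring helper, not an established method: the question keys paraphrase a subset of the checklist, and the thresholds are arbitrary assumptions that a team would need to set for itself.

```python
# Subset of the checklist, phrased so that True supports an
# AI-driven explanation. Key names are illustrative assumptions.
EVIDENCE_QUESTIONS = (
    "ai_system_in_production",
    "productivity_measured_before_cuts",
    "cuts_concentrated_in_automatable_workflows",
    "ai_investment_rising",
    "workflow_evidence_shown",
)


def assess_ai_attribution(answers):
    """Summarize how well the answers support an AI-driven explanation.

    `answers` maps question keys to True, False, or None (unknown).
    Missing keys count as unknown. Thresholds are arbitrary assumptions.
    """
    supported = [q for q in EVIDENCE_QUESTIONS if answers.get(q) is True]
    unknown = [q for q in EVIDENCE_QUESTIONS if answers.get(q) is None]
    if len(supported) >= 4 and not unknown:
        verdict = "plausibly AI-driven"
    elif supported:
        verdict = "AI may be a factor; keep the causal claim cautious"
    elif unknown:
        verdict = "unclear; AI may still be part of the story"
    else:
        verdict = "little support for an AI-driven explanation"
    return {"verdict": verdict, "supported": supported, "unknown": unknown}


# Hypothetical example: investment is rising, but no production system exists.
result = assess_ai_attribution(
    {"ai_investment_rising": True, "ai_system_in_production": False}
)
print(result["verdict"])
```

Treating unanswered questions as unknown rather than false mirrors the point above: missing evidence does not rule AI out, but it does not justify a strong causal claim either.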

Signals That an AI Workforce Strategy Is Becoming Mature

A mature AI workforce strategy is specific, measured, and governed. It connects tools to workflows and workflows to roles.

Look for an AI use-case inventory, model evaluation standards, risk registers, training tied to actual tools, transparent workforce communication, and task-level role exposure maps. Mature organizations also define human accountability for AI-assisted decisions that affect customers, employees, or critical systems.

Immature organizations show the opposite pattern: tool sprawl, unclear ownership, unsupported productivity claims, opaque layoff narratives, and no shared view of which roles are changing.

What This Means for the State of Tech in 2026

The state of tech in 2026 is not simply that AI is replacing workers. It is that AI is becoming operational infrastructure faster than many organizations can redesign work around it.

GPT-5.5 and the GPT-5.5 Instant release show continued model progress. The layoff surge shows continued pressure on technology companies to operate with more discipline. The connection between them is real, but not simple.

For leaders, the best next step is not a broad AI headcount assumption. It is a structured review of task exposure, governance readiness, and capability risk. That review should inform hiring, reskilling, automation, and communication.

The organizations most likely to navigate 2026 well will not be the ones that cut fastest or adopt loudest. They will be the ones that understand where AI creates leverage, where human judgment remains essential, and where workforce decisions need evidence before narrative.