How to Implement AI to Modernize Your Systems

TL;DR: If you read nothing else

- AI hasn't made modernization easy. It has made it economically possible. The discovery and documentation phase that used to eat 40 to 60% of a legacy project's budget is now where AI earns its keep.

- Pick a path before picking a tool. The 7 R's (Rehost, Replatform, Refactor, Rearchitect, Rebuild, Replace, Encapsulate) still apply. AI just changes which one is viable for a given situation.

- Costs in 2026 range from $25K for a contained SMB pilot to $600M+ for a core-banking overhaul. McKinsey documented one bank modernizing 400 pieces of software at that scale using agent squads.

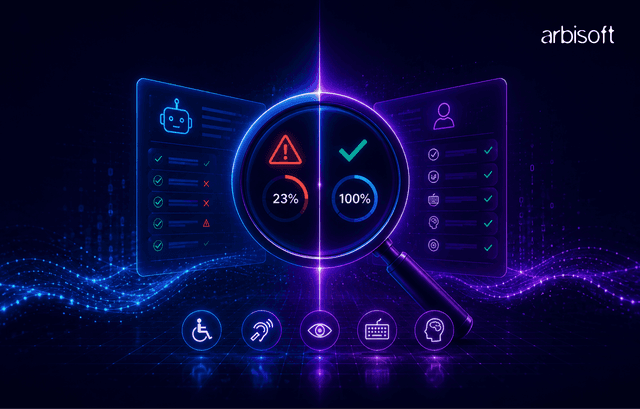

- The biggest risk is bad governance. Prompt injection, unfiltered retrieval, and missing audit trails cause more production incidents than hallucinations do.

- Start with one workflow, ship a thin vertical slice in 90 days, then earn the right to scale. 90% of vertical AI use cases are still stuck in pilot (McKinsey). Don't join them.

Why this is suddenly urgent in 2026

Legacy modernization has been on every CIO's "next year" list for ten years running. Then something shifted.

Recently, Anthropic announced that its Claude AI can now automate the exploration and analysis of legacy COBOL code, a capability that sent IBM stock plunging more than 13% in a single trading session. Markets noticed. So did CFOs. The discovery phase of a modernization project, the bit that used to require six months and a team of consultants who remembered what JCL stood for, is suddenly a thing a model can do over a weekend.

That's the headline. The reality underneath it is messier.

According to McKinsey, 70% of Fortune 500 companies still operate software that's more than two decades old. The modernization market itself hit $24.98 billion in 2025 and is projected to reach $56.87 billion by 2030. McKinsey research also indicates that 68% of enterprises still depend on legacy systems for core functions, yet only 22% have a clear modernization roadmap. Gartner estimates that 70% of full rewrites exceed budgets or timelines.

Here's the honest version. AI didn't make modernization easy. It made it economically possible for more companies, for more workloads, with less of the brutal discovery work that used to kill projects before they started.

There's a catch, though. AI introduces its own failure modes. Prompt injection. Retrieval leaks. Hallucinated business logic. Audit trails that don't hold up when the compliance officer asks who approved what.

This guide covers the decision framework, the 8-step implementation process, the tools that actually work on legacy code in 2026, real cost ranges, and the governance tripwires most teams miss until it's too late.

What "AI modernization" actually means (and what it doesn't)

AI-powered system modernization is the use of AI tools, including LLMs, coding copilots, retrieval systems, and increasingly agents, to accelerate the work of moving legacy applications toward modern, cloud-ready architectures. It is incremental replacement done carefully, not rip-and-replace. In most 2026 projects, AI handles code analysis, documentation, translation, test generation, and migration scaffolding, while humans handle architecture decisions, business-rule validation, and anything that touches compliance.

The distinction that matters: AI changes the economics of the phases that used to be expensive. The phases that were already hard remain hard. Business-rule reconstruction got cheaper. Testing got cheaper. Making good architecture decisions is still a judgment call, and it is still the thing that will sink a project when done poorly.

How this differs from digital transformation

Digital transformation is the whole program: customer experience, new revenue streams, cultural change, new operating models. System modernization is one slice of that program, the technical foundation. A company can modernize without transforming (Capital One lift-and-shifted to AWS years before calling itself "digital"). Meaningful transformation, though, requires modernization, because legacy systems will eventually cap every ambition an organization has.

The 2026 business case: why waiting another year doesn't work anymore

Three things changed in the last 18 months that make this different from the "modernize or die" sermon CIOs have been hearing since 2017.

The talent math broke. As of 2025, around 47% of organizations cannot fill their open COBOL developer roles. Salaries for COBOL engineers have been rising quickly as a result, and industry projections suggest that by 2027, the vast majority of remaining COBOL developers will have retired. That isn't a talent shortage to manage. That's a cliff. And 95% of US ATM transactions along with 43% of global banking systems still run on the language the cliff is taking with it.

AI changed the cost curve for the expensive parts. Thoughtworks (2026) put it plainly: "Modernisation is rarely about preserving the past in a new syntax. It's about aligning systems with current market demands." AI handles the translation. Humans handle the alignment. The ratio of consultant hours to engineer hours just inverted.

Regulators stopped being patient. Gartner predicts that by the end of 2026, "death by AI" legal claims will exceed 2,000 due to insufficient AI risk guardrails. EU AI Act enforcement is ramping. SEC cybersecurity disclosure rules are live. When a modernized system uses AI anywhere near customer decisions, "governance later" stops being a defensible position.

Here's the stat that should land in any CFO's inbox: industry data from Gartner and Deloitte consistently shows enterprises allocating 60 to 80% of their IT budget to maintaining existing systems. Every year of delay grows that number. Every year of delay shrinks the talent available to do the work.

Is the organization actually ready? A realistic self-assessment

A valid answer to "should we modernize with AI?" is "not yet." Two companies can spend north of $400K on pilots that fail for the same reason: they lack the foundations to support the thing they are trying to build.

Before green-lighting a budget, run the situation through this list. When more than three items return "no," the first project isn't AI modernization. It's building the foundation that makes AI modernization possible.

Technical readiness

- Can the team access legacy code and data without a ticket that takes three weeks to approve?

- Does at least one integration surface exist (APIs, message queues, database replicas) that AI tooling can route through safely?

- Is there a non-production environment that mirrors production closely enough to test against?

- Is the data classified? Does the team know which fields are PII, PHI, or regulated?

- Can role-based access be enforced at the data layer?

Organizational readiness

- Is there a named owner for this program with authority to say no?

- Have security and privacy been invited in from day one?

- Is there a baseline measurement (current cycle time, error rate, cost) that improvement can be proven against?

- Can the team handle a pilot that doesn't work without political fallout?

- Is the organization prepared to pay for governance tooling (evaluation, observability, guardrails) that won't produce a single user-visible feature?

That last one stings for a reason. It's the one most programs skip. It's also the one that separates a proof-of-concept deck from a production system three years later.

The decision framework: choose a modernization path before picking a tool

The 7 R's of application modernization have been around for over a decade. They still apply in the age of AI. What changed is which R is economically viable for a given situation. Refactoring used to require months of human code archaeology. Now AI can do the archaeology, so Refactor is available to projects that would have forced a Rebuild two years ago.

Here's a quick reference for what each option means:

| The R | What it means | When it fits |
| --- | --- | --- |
| Encapsulate | Wrap legacy functionality in APIs without touching the inside. | New capabilities are needed around a stable core. Netflix did this before their microservices migration. |
| Rehost (lift-and-shift) | Move the application as-is to new infrastructure, usually cloud. | Hardware end-of-life pressure with no appetite for code changes. Quick payback, modest long-term value. |
| Replatform | Move to a new runtime or container with minimal code change. | Cloud-native operational benefits are wanted without a rewrite. Sweet spot for many mid-market projects. |
| Refactor | Restructure the code without changing its behavior. | Technical debt is strangling the system, but the logic is still correct. AI has made this the fastest-growing R. |
| Rearchitect | Redesign the architecture, typically monolith to microservices. | The original design has been outgrown. Requires strong engineering maturity. |
| Rebuild | Rewrite from scratch, preserving specs. | The code is genuinely unsalvageable. Expensive and risky; Gartner's 70% budget-overrun stat mostly lives here. |
| Replace | Throw it out, buy or build something new. | A better commercial option exists and switching cost is bearable. |

Most real projects end up using two or three R's together. A single bank might Encapsulate its mainframe core, Replatform its middleware, and Refactor the customer-facing apps all at once.

How to choose: a decision matrix

There's no algorithm that spits out the right R. These are the signals that narrow the field fast.

| If this is true... | Lean toward... | Why |
| --- | --- | --- |
| Core logic is correct, code is a mess | Refactor with AI assist | 2026's cheapest path for good logic trapped in bad code |
| Hardware is end-of-life, budget is tight | Rehost then revisit | Buys time, defers the hard work |
| Multiple apps need the same capability | Encapsulate first, then Rearchitect | API layer becomes the stepping stone |
| COBOL, talent retiring, regulator watching | Refactor to Java or C# with AI | The CLPS and Accenture playbook |
| The business wants features the current system can't support | Rearchitect in parallel | The Strangler Fig pattern: keep the old running while the new grows |
| A vendor has solved the problem | Replace and redirect engineering | Don't build what can be bought |
| Code is undocumented, team has left | AI-assisted discovery first, decision after | Decide the R after the AI has mapped the estate |

The last row is the one most teams miss. It's hard to pick the right modernization path for a system that hasn't been understood. In 2026, AI-assisted discovery is fast enough and cheap enough that it should almost always come first.

The 8-step implementation playbook

Step 1: Define outcomes and guardrails before touching a model

Pick one workflow instead of a portfolio. One. Write a one-page scorecard: baseline metrics, numeric targets, unacceptable failure modes, latency and cost ceilings with alerting, and escalation paths for when the AI suggests versus when a human decides. Do this before model selection turns into a political fight. It will.
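To make the one-page scorecard concrete, here is a minimal sketch of it as a data structure. The workflow name, metric names, and thresholds are all hypothetical, and every metric is assumed to be lower-is-better; a real scorecard would carry whatever the team actually measures.

```python
from dataclasses import dataclass, field


@dataclass
class Scorecard:
    """One-page outcome scorecard for a single workflow (all values illustrative)."""
    workflow: str
    baseline: dict[str, float]   # measured before the pilot starts
    targets: dict[str, float]    # numeric targets; lower is better in this sketch
    ceilings: dict[str, float]   # hard limits that should raise an alert
    unacceptable_failures: list[str] = field(default_factory=list)

    def breached_ceilings(self, observed: dict[str, float]) -> list[str]:
        """Return the metrics whose observed value exceeds its ceiling."""
        return sorted(name for name, limit in self.ceilings.items()
                      if observed.get(name, 0.0) > limit)

    def targets_met(self, observed: dict[str, float]) -> bool:
        """True only if every target metric was measured and is at or below target."""
        return all(name in observed and observed[name] <= target
                   for name, target in self.targets.items())


card = Scorecard(
    workflow="invoice-dispute triage",  # hypothetical workflow
    baseline={"cycle_time_hours": 48.0, "error_rate": 0.08},
    targets={"cycle_time_hours": 24.0, "error_rate": 0.05},
    ceilings={"p95_latency_s": 5.0, "cost_per_request_usd": 0.10},
    unacceptable_failures=["sends customer-facing text without human approval"],
)

observed = {"cycle_time_hours": 20.0, "error_rate": 0.06,
            "p95_latency_s": 6.2, "cost_per_request_usd": 0.04}

print(card.breached_ceilings(observed))  # latency is over its ceiling
print(card.targets_met(observed))        # error rate still misses its target
```

Writing the scorecard as data rather than a slide means the same thresholds can later drive alerts and release gates.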

Step 2: Map the system, the data, and the actual work

Org charts lie. Work happens in shadow spreadsheets, Slack DMs, and a senior engineer's head. Build a workflow map with exception paths, a system map with owners, and data lineage for each key field. Clarify system of record (where the truth lives) versus system of action (where people work). Miss this and the result is a bug that reads correctly on every screen and lies in the database.

Step 3: Choose the right first use case

Feasibility comes down to data access, integration surface, risk profile, and evaluability. Good first picks: RAG-based Q&A, grounded summarization, classification, developer assistance, and assistive drafting with human approval. Avoid high-stakes automated decisions and regulator-facing outputs with immature evaluation. Quick test: can the team build a golden set of 50 correct examples in under a week? If not, it's a research project dressed up as a product.
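The golden-set test is easier to reason about with a tiny harness in view. The sketch below assumes a classification use case; `keyword_classifier` is a deliberately naive stand-in for the real model call, and the four examples are invented (a real set would have 50 or more).

```python
from typing import Callable

GoldenSet = list[tuple[str, str]]  # (input text, expected label)


def evaluate(predict: Callable[[str], str], golden: GoldenSet) -> dict:
    """Score a predictor against the golden set and list every miss."""
    if not golden:
        raise ValueError("golden set is empty")
    misses = []
    for text, expected in golden:
        got = predict(text)
        if got != expected:
            misses.append((text, expected, got))
    return {
        "n": len(golden),
        "accuracy": 1 - len(misses) / len(golden),
        "misses": misses,
    }


def keyword_classifier(text: str) -> str:
    """Hypothetical stand-in for the model under test."""
    return "billing" if "invoice" in text.lower() else "technical"


golden = [
    ("Invoice 1182 was charged twice", "billing"),
    ("Cannot log in after password reset", "technical"),
    ("Refund has not arrived", "billing"),
    ("Export job times out", "technical"),
]

report = evaluate(keyword_classifier, golden)
print(report["accuracy"])
for text, expected, got in report["misses"]:
    print(f"MISS: {text!r} expected={expected} got={got}")
```

If the team cannot agree on what goes in the `expected` column, that disagreement is the finding.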

Step 4: Build the data and access foundations

RAG ingestion must clean content, attach metadata, and preserve lineage. Stale retrieval is worse than none because it looks authoritative. Enforce access control at retrieval time; UI-level controls alone will leak. One pilot got killed in week seven because a chatbot quoted a confidential acquisition memo to a junior analyst. Minimize PII in prompts, redact where possible, isolate AI services on the network.
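Here is a minimal sketch of what "access control at retrieval time" can look like. It assumes each chunk carries the group permissions copied from its source system at ingestion; the `Chunk` type, group names, and scores are hypothetical, and a production system would more likely push this filter into the vector store query itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    text: str
    allowed_groups: frozenset[str]  # copied from the source system at ingestion
    score: float                    # similarity score from the retriever


def retrieve_for_user(candidates: list[Chunk], user_groups: set[str], k: int = 3) -> list[Chunk]:
    """Drop chunks the user may not read, then keep the top-k by score.

    Filtering happens before anything reaches the prompt, so the model
    never sees text the caller is not entitled to.
    """
    visible = [c for c in candidates if c.allowed_groups & user_groups]
    return sorted(visible, key=lambda c: c.score, reverse=True)[:k]


candidates = [
    Chunk("Acquisition memo: target valuation and timeline", frozenset({"corp-dev"}), 0.93),
    Chunk("Expense policy: meal limits and approvals", frozenset({"all-staff"}), 0.71),
    Chunk("Travel booking how-to", frozenset({"all-staff", "contractors"}), 0.64),
]

# A junior analyst belongs only to "all-staff": the memo is the best match but is filtered out.
analyst_context = retrieve_for_user(candidates, {"all-staff"})
for chunk in analyst_context:
    print(chunk.score, chunk.text)
```

The highest-scoring chunk is exactly the one that must not be returned, which is why a UI-level check after generation is too late.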

Step 5: Decide the architecture pattern

Three patterns, chosen by how many teams will use the thing:

| Pattern | Best when | Main risks |
| --- | --- | --- |
| Embedded AI | One app owns the workflow | Tight coupling |
| Sidecar service | Several apps share capability | Network hops, API discipline |
| Shared platform | Multiple teams over 12+ months | Upfront cost, multi-tenancy |

Start with Embedded or Sidecar and earn the way to a platform. Building the platform first can burn $2M before a single feature ships. Treat RAG as its own subsystem with dedicated SLOs, evaluation, and threat model.

Step 6: Ship a thin vertical slice

A thin vertical slice runs end-to-end through every layer: UI, business logic, data access, AI service, back to the user. It surfaces real risks before architecture gets locked in. Build evaluation in from day one: offline datasets, regression across prompts and model versions, human review for ambiguous outputs. Keep a working non-AI path behind a feature flag. It will be needed.
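The feature-flagged non-AI path can be sketched in a few lines. The flag name, the summarization task, and both functions are hypothetical; `ai_summary` raises on purpose to simulate a model outage.

```python
import logging

logger = logging.getLogger("ai_slice")


def rule_based_summary(ticket: str) -> str:
    """The existing non-AI path: first sentence, truncated to 80 characters."""
    return ticket.split(".")[0][:80]


def ai_summary(ticket: str) -> str:
    """Placeholder for the model call; raises here to simulate an outage."""
    raise TimeoutError("model endpoint did not respond")


def summarize(ticket: str, flags: dict[str, bool]) -> tuple[str, str]:
    """Return (summary, path_used).

    Falls back to the non-AI path when the flag is off or the AI call fails.
    """
    if flags.get("ai_summary_enabled", False):
        try:
            return ai_summary(ticket), "ai"
        except Exception as exc:
            logger.warning("AI path failed, using fallback: %s", exc)
    return rule_based_summary(ticket), "fallback"


summary, path = summarize(
    "Printer offline since Monday. Tried rebooting twice.",
    {"ai_summary_enabled": True},
)
print(path, "->", summary)
```

Returning the path taken alongside the result makes it cheap to chart how often the fallback fires, which is itself a quality signal.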

Step 7: Productionize with LLMOps and MLOps discipline

Treat prompts, retrieval indexes, and model versions as first-class artifacts that get versioned, reviewed, and deployed like application code. Run CI/CD with staged environments. Monitor latency, error rates, cost, and quality. Use canary releases and shadow mode for model changes. Add red teaming for prompt injection, jailbreaks, and data extraction.
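Shadow mode is the least familiar item in that list, so here is a minimal sketch. Users always receive the current model's answer; the candidate runs silently and only its agreement is logged. Both "models" are hypothetical keyword functions standing in for real calls.

```python
from typing import Callable

Model = Callable[[str], str]


def serve_with_shadow(request: str, current: Model, candidate: Model, log: list[dict]) -> str:
    """Answer with the current model; run the candidate silently and record agreement."""
    answer = current(request)
    try:
        shadow_answer = candidate(request)
    except Exception as exc:  # a failing candidate must never affect users
        shadow_answer = f"<error: {exc}>"
    log.append({"request": request, "agree": answer == shadow_answer})
    return answer


def disagreement_rate(log: list[dict]) -> float:
    """Share of logged requests where the candidate differed from the current model."""
    return sum(not entry["agree"] for entry in log) / len(log)


def current_model(text: str) -> str:
    return "refund" if "refund" in text else "other"


def candidate_model(text: str) -> str:
    return "refund" if "refund" in text or "money back" in text else "other"


log: list[dict] = []
requests = ["refund please", "I want my money back", "where is my order", "reset password"]
answers = [serve_with_shadow(r, current_model, candidate_model, log) for r in requests]

print(answers)
print(disagreement_rate(log))
```

The disagreements are the cases worth sending to human review before the candidate is promoted to a canary.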

Step 8: Roll out, drive adoption, scale

Phased rollout cuts organizational risk. Narrow cohort first, expand when metrics hold steady, and keep governance gates tied to artifacts rather than opinions. Adoption is the usual bottleneck; trust, workflow mismatch, and UI friction kill more features than quality does. Ship the AI into tools people already use. Track cycle time, rework, error rates, and escalations against a baseline.
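"Expand when metrics hold steady" can be turned into an explicit gate. In the sketch below, the rollout ladder, the 5% tolerance, and the metric names are all assumptions, and every metric is treated as lower-is-better.

```python
STAGES = [5, 25, 50, 100]  # percent of users; hypothetical rollout ladder


def holds_steady(baseline: dict[str, float], current: dict[str, float],
                 tolerance: float = 0.05) -> bool:
    """True if no metric is more than `tolerance` worse than its baseline.

    All metrics are assumed lower-is-better (cycle time, rework, escalations).
    """
    return all(current[name] <= value * (1 + tolerance)
               for name, value in baseline.items())


def next_stage(stage_index: int, baseline: dict[str, float], current: dict[str, float]) -> int:
    """Advance one rung when metrics hold steady; otherwise stay on the current rung."""
    if holds_steady(baseline, current) and stage_index < len(STAGES) - 1:
        return stage_index + 1
    return stage_index


baseline = {"cycle_time_hours": 40.0, "rework_rate": 0.10, "escalations_per_week": 12}
healthy = {"cycle_time_hours": 31.0, "rework_rate": 0.09, "escalations_per_week": 12}
regressed = {"cycle_time_hours": 31.0, "rework_rate": 0.14, "escalations_per_week": 12}

print(STAGES[next_stage(0, baseline, healthy)])    # cohort grows
print(STAGES[next_stage(0, baseline, regressed)])  # cohort stays put
```

A gate like this ties the expansion decision to recorded numbers, which is what "artifacts rather than opinions" amounts to in practice.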

The AI tooling landscape for legacy modernization in 2026

The tool market moved faster in the last 18 months than in the five years before it. Here's the honest comparison: strengths, weaknesses, and where each tool earns its keep on legacy code.

| Tool | Best at | Mainframe / COBOL? | On-prem / air-gapped | Enterprise compliance | Rough price tier |
| --- | --- | --- | --- | --- | --- |
| GitHub Copilot Enterprise | Broad developer productivity, IDE-embedded | Limited. Strong on Java, weaker on COBOL nuance | No (cloud) | SOC 2, strong enterprise story | $39/user/month |
| Claude Code (Anthropic) | Large-context analysis, code exploration, COBOL discovery | Yes. Feb 2026 update adds specific COBOL capability | API-based | SOC 2, HIPAA eligible | Usage-based |
| Amazon Q Developer | AWS-native modernization, Java upgrades | Moderate. Stronger on Java modernization than mainframe | No (AWS cloud) | AWS compliance inheritance | From $19/user/month |
| IBM watsonx Code Assistant for Z / Project Bob | Mainframe-specific. COBOL to Java on IBM Z | Yes. Purpose-built for IBM Z | Yes (with IBM Z) | IBM compliance portfolio | Enterprise pricing |
| Cursor | Multi-file refactoring, large-scale changes | Weak on mainframe | No (cloud) | Improving; check current certifications | $20/user/month+ |
| Tabnine | Privacy-sensitive environments, on-prem deployment | Moderate | Yes (on-prem option) | Strong for regulated industries | Enterprise pricing |
| Zencoder | Agentic analysis of AS/400 and similar legacy | Yes. ZDNET's PRCA case study | Varies | Emerging | Usage-based |
| TCS MasterCraft / TransformPlus | Large-scale COBOL modernization with services wrap | Yes | Yes (on-prem available) | Full enterprise stack | Project-based |

A few things the table doesn't capture.

The right tool is usually two or three tools. Successful enterprise modernization programs rarely run on a single copilot. Real programs pair a discovery-focused tool (Claude Code, Project Bob) with an IDE-embedded coding assistant (Copilot, Cursor, Tabnine) and a testing-focused tool in the CI/CD pipeline. Pick for the job.

"Enterprise-ready" is a spectrum. In regulated industries, the on-prem or air-gapped options matter more than feature parity. A tool that cannot be deployed inside the network cannot be used for the core banking system, no matter how good its benchmarks look.

Build versus buy is real. When IP is sensitive enough or the domain knowledge niche enough, fine-tuning an open-weight model on an internal codebase can be worth the engineering investment. Most organizations should not do this in year one. Maybe in year three.

What it actually costs in 2026

This is the section most competitor content dodges. Here's the honest answer, with ranges, caveats, and a warning about the numbers commonly quoted elsewhere.

SMB contained pilot: $25,000 to $75,000

One workflow. One team. Off-the-shelf tooling. Typically 8 to 12 weeks to a working thin vertical slice. Most of the budget is engineering hours. Tool licensing is cheap at this scale. Cloud costs are rounding error. Works well for things like a support-team knowledge assistant or an internal Q&A tool over a documentation corpus.

Mid-market modernization program: $200,000 to $1.2M per year

Multiple workflows over 6 to 18 months. Typically 2 to 5 engineers dedicated, a product manager, part-time security and data owners. Real money starts going to governance tooling: evaluation platforms, observability, vector databases, prompt versioning. Cloud costs become visible. Expect 30 to 40% of the budget to go to governance, testing, and operations rather than model usage.

Enterprise transformation: $2M to $50M+

Full program. Multiple teams, multiple domains, regulated environment, mainframe or near-mainframe workloads. McKinsey published a case of a large bank modernizing its legacy core system (400 pieces of software, budgeted at more than $600 million) using agent squads supervised by humans. That's the outer edge. Full modernization projects of mid-sized enterprise scope regularly cost anywhere from four to nine million dollars, with some large enterprise systems running far higher.

Where the money goes, roughly, in a mid-to-large program:

- Engineering time: 40 to 55%. Still the biggest line item. AI made engineers faster. It did not make them free.

- Governance, evaluation, observability: 15 to 25%. This is the line item most budgets underestimate by a factor of two.

- Cloud infrastructure: 10 to 20%. Hybrid architectures dominate. By 2026, 92% of enterprises operate in hybrid or multi-cloud environments.

- AI tooling and model usage: 5 to 15%. Smaller than most leaders expect going in.

- Change management, training, communication: 10 to 15%. Underfunded in almost every program.
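To see what those percentages mean in dollars, here is a small sketch that applies the ranges above to a hypothetical $1M annual budget. The budget figure is an assumption; the shares are the ones listed.

```python
# Percentage ranges from the breakdown above, as (low, high).
SHARES_PCT = {
    "engineering time": (40, 55),
    "governance, evaluation, observability": (15, 25),
    "cloud infrastructure": (10, 20),
    "AI tooling and model usage": (5, 15),
    "change management, training, communication": (10, 15),
}


def allocation_ranges(total_budget_usd: int) -> dict[str, tuple[int, int]]:
    """Convert each percentage range into a (low, high) dollar range."""
    return {
        item: (total_budget_usd * lo // 100, total_budget_usd * hi // 100)
        for item, (lo, hi) in SHARES_PCT.items()
    }


ranges = allocation_ranges(1_000_000)  # hypothetical mid-market budget
for item, (lo, hi) in ranges.items():
    print(f"{item:<45} ${lo:>9,} to ${hi:>9,}")

low_total = sum(lo for lo, _ in SHARES_PCT.values())
high_total = sum(hi for _, hi in SHARES_PCT.values())
print(f"low ends sum to {low_total}%, high ends to {high_total}%")
```

The low ends add up to 80% and the high ends to 130%, so a real plan has to pick a point inside each range; going high on one line means going low on another.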

ROI timelines to expect. IDC reports that organizations achieve an average 2.3x return on agentic AI investments within 13 months, with ROI expected to grow as adoption scales. That's an average. Frontier adopters see more like 2.84x. Laggards see 0.84x, meaning they lose money. The difference comes down to governance and adoption discipline.

A number to treat with some skepticism: the "60 to 70% time reduction" figures in vendor case studies. They're often real for the narrow phase being measured (e.g., code translation) and misleading when extrapolated to the whole project. An end-to-end reduction of 40 to 50% on a complex modernization project is closer to what shows up in practice. Still transformative.

SMB versus enterprise: two different playbooks

Most content on this topic reads like it was written for a Fortune 500 CIO. For a 40-person company or a 300-person one, the advice to "build an AI center of excellence" and "appoint an AI Ethics Officer" is unrealistic. It's also the wrong strategy.

For SMBs (under ~500 employees)

- Use off-the-shelf. Aggressively. GitHub Copilot, Claude for API use, a standard RAG framework. Avoid building platforms that won't get reused.

- Pick one workflow with clear ROI. Support automation, document intelligence, developer productivity, sales research.

- Skip the agentic mesh conversation. Orchestration across ten agents isn't the priority. One workflow that works is.

- Budget $25K to $75K for the first pilot. When that isn't available, find the workflow that will pay for it in 90 days.

- The governance document is a page long. Classifications, access rules, escalation paths. Sufficient beats comprehensive.

For enterprises (1,000+ employees, regulated, global)

- Centralize the platform. Decentralize the use cases. Shared retrieval, shared evaluation, shared observability. Domain teams ship their own features on top.

- Get legal and compliance into the first design review. With Gartner predicting 2,000+ "death by AI" legal claims by the end of 2026, retroactive compliance has stopped being a viable strategy.

- Map to a real governance framework. NIST AI RMF. ISO 42001. EU AI Act category. Inventing something internal and hoping it holds up is a losing bet.

- Budget for red teaming. A specialist firm, not the internal pen testers. AI-specific attack surfaces (prompt injection, data extraction, model evasion) require specialist skills.

- Expect agentic AI to force operating-model change. McKinsey's 2026 research found that around 75% of current roles will need to be reshaped as AI embeds across workflows. That's an HR conversation.

Industry spotlights: what this looks like in practice

Banking and financial services

The COBOL cliff is sharpest here. CLPS Incorporation launched an AI-driven COBOL-to-Java migration solution in March 2026 and completed a PoC for a major bank in Hong Kong, demonstrating that AI-enabled migration could rapidly and accurately convert COBOL code to Java while maintaining system stability. Accenture built a similar tool using GPT-4 and a vector database that reverse-engineers COBOL code to provide developers with technical and functional documentation needed to accelerate modernization. In its article "AI for IT modernization: Faster, cheaper, better," McKinsey shares a case study around a FinTech company that needed to modernize 20,000 lines of code estimated at 700 to 800 hours. After deploying genAI agents, the business cut that number by 40%.

The pattern: AI for discovery and translation, humans for behavioral equivalence validation and regulatory reporting. Never skip the validation step. A credit system that produces "mostly correct" answers is a credit system that produces fines.

Healthcare

EHR modernization has a regulatory overlay that changes the calculus. HIPAA audit trails, PHI handling, interoperability standards. Several hospital networks have run excellent AI pilots that stalled at production because the evaluation data couldn't leave the SOC 2 environment. Build that in from week one, or don't build.

Where AI is earning its keep: clinical documentation summarization, prior-authorization automation, imaging workflow triage. Where it's still too risky for most: anything that touches a clinical decision without explicit physician sign-off.

Retail and logistics

Less regulation, faster iteration, more room for experimentation. Demand forecasting, dynamic pricing, inventory AI over legacy ERP systems. A lot of this starts as RAG over product catalogs and operational documents, then expands as trust builds.

A practical lesson from one retailer: start with the thing the team already does badly. For them it was category-code classification, a boring, high-volume, low-stakes task that AI could 10x. The flashy personalization engine came later. It would not have worked first.

Manufacturing

PLC modernization, predictive maintenance, shop-floor documentation. Long asset lifecycles (decades) make this space distinctive. A German automotive supplier modernized its legacy PLC-based production line by using AI to refactor control logic into cloud-native microservices, deploying autonomous agents to detect and resolve assembly line bottlenecks, and integrating with SAP S/4HANA for real-time supply chain adjustments.

The failure modes that sink AI modernization programs

Enough modernization programs have died to categorize the deaths. Here are the five patterns that recur, and what to do about each.

1. Data gaps discovered mid-build. Teams commit to a timeline based on a data landscape that doesn't exist. Fix: do a real data audit in week one. Sample actual production data. Ask the security team what they'd block before designing around it.

2. Fragile legacy integrations that break under load. Pilot works. Production doesn't. Fix: load-test the integration surface before the AI feature ships. Assume the legacy system will do weird things under 10x traffic.

3. Missing or theatrical evaluation. Value becomes unprovable, regressions invisible. Fix: build the golden set before building the feature. 50 examples minimum. Refresh monthly.

4. Security review started too late. Launch gets blocked in month five because someone finally asked about data residency. Fix: invite security to week one. Non-negotiable.

5. Poor workflow fit, weak adoption. Feature ships, nobody uses it. Fix: spend real time with the users before designing the AI behavior.

A practical technique that saves programs: the pre-mortem. Before starting, assume the project failed. Write the post-mortem. What killed it? Then turn those causes into gates. Define kill criteria, the metrics that would mean "stop working on this." Most teams never define them, and then it becomes politically impossible to stop a project that's obviously failing. Being the team that defined them is the difference between a disciplined exit and sunk-cost theater.
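Kill criteria work best when they are written down as checkable conditions before the project starts. A minimal sketch follows; the metrics, thresholds, and reasons are hypothetical examples of what a pre-mortem might produce.

```python
import operator
from dataclasses import dataclass

_OPS = {"<": operator.lt, ">": operator.gt}


@dataclass(frozen=True)
class KillCriterion:
    metric: str
    op: str           # "<" or ">"
    threshold: float
    reason: str

    def triggered(self, observed: dict[str, float]) -> bool:
        """A missing metric counts as triggered: no measurement, no green light."""
        if self.metric not in observed:
            return True
        return _OPS[self.op](observed[self.metric], self.threshold)


def review(criteria: list[KillCriterion], observed: dict[str, float]) -> list[str]:
    """Return the reasons for every criterion that says 'stop'."""
    return [c.reason for c in criteria if c.triggered(observed)]


CRITERIA = [
    KillCriterion("golden_set_accuracy", "<", 0.85, "quality below agreed floor"),
    KillCriterion("weekly_active_users", "<", 20, "nobody is using it"),
    KillCriterion("cost_per_request_usd", ">", 0.25, "unit cost above ceiling"),
]

observed = {"golden_set_accuracy": 0.91, "weekly_active_users": 12,
            "cost_per_request_usd": 0.08}

stop_reasons = review(CRITERIA, observed)
print(stop_reasons)
```

Treating an unmeasured metric as a triggered criterion is a design choice in this sketch: it keeps a program from passing its gates simply by not instrumenting them.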

What "modernized" actually looks like in production

The best sign of a successful AI modernization: the system is boring.

Observable. Auditable. Recoverable. Not exciting. The drama of the pilot has been replaced by dashboards, runbooks, and a change process that treats prompts like production code.

Artifacts to be able to show on demand:

- Architecture diagrams showing where the AI service sits relative to legacy and modern components

- Data lineage and RAG flow diagrams, including ingestion, indexing, and retrieval boundaries

- Evaluation reports with datasets, rubrics, thresholds, results, and regression history

- Dashboards for latency, error rates, cost, and quality indicators

- Runbooks for AI incidents, including rollback steps and stakeholder communication

Signals that matter:

- SLO attainment, including error budgets for the AI service

- Quality indicators: evaluation scores, override rates, escalation frequency, safety filter trigger rates

- Retrieval health: hit rates, retrieval latency, drift in corpus composition

- Change traceability: what changed (prompt, model, index, data), when, who approved it
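For teams new to error budgets, the arithmetic behind the first signal is short. The sketch below assumes a 99.5% SLO over a window of 200,000 requests; both numbers are illustrative, and what counts as a "bad" event (timeouts, failed safety checks, low-quality answers) is a definition each team has to make for itself.

```python
def error_budget(slo_target: float, total_events: int, bad_events: int) -> dict:
    """Compute how much of the error budget a window has consumed.

    slo_target is the fraction of events that must be good, e.g. 0.995.
    """
    if not 0 < slo_target < 1:
        raise ValueError("slo_target must be between 0 and 1")
    allowed = round(total_events * (1 - slo_target))
    consumed = bad_events / allowed if allowed else float("inf")
    return {
        "allowed_bad_events": allowed,
        "consumed": consumed,
        "exhausted": bad_events >= allowed,
    }


# Hypothetical 30-day window for the AI service.
status = error_budget(slo_target=0.995, total_events=200_000, bad_events=640)
print(status)
```

Once the budget is exhausted, the usual convention is that reliability work takes priority over new features until the service is back inside its SLO.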

Before expanding scope, verify four things actually work: evaluation is real and repeatable, security boundaries hold end-to-end (including at retrieval time), monitoring catches regressions quickly, and rollback has been practiced under load.

Frequently asked questions

1. What is AI-powered legacy system modernization?

It's the use of AI tools (LLMs, copilots, retrieval systems, agents) to accelerate moving legacy applications to cloud-ready architectures. AI handles code analysis, translation, and test generation; humans retain architecture and compliance decisions.

2. How long does it take to modernize a legacy system with AI?

SMB pilots ship a working slice in 8 to 12 weeks. Mid-market programs run 6 to 18 months. Enterprise transformations take 2 to 5 years, roughly 40 to 50% faster than pre-2024 projects.

3. How much does AI legacy modernization cost in 2026?

SMB pilots run $25K to $75K. Mid-market programs cost $200K to $1.2M per year. Enterprise transformations hit $2M to $50M+, with McKinsey documenting one bank at $600M. Budget 15 to 25% for governance.

4. Can AI really translate COBOL to Java accurately?

Yes, for discovery, documentation, and initial translation. IBM's Project Bob, Anthropic's Claude Code, CLPS, and Accenture have working tools. Behavioral equivalence validation, testing, and mainframe-specific non-functional handling still require human engineers.

5. What are the 7 R's of application modernization?

Encapsulate (wrap in APIs), Rehost (lift-and-shift), Replatform (shift runtime), Refactor (restructure code), Rearchitect (redesign), Rebuild (rewrite from scratch), and Replace (retire and buy). Most programs combine two or three.

6. How do teams choose between refactoring, rebuilding, and replacing?

Refactor when logic is correct but code is messy. Rebuild when code is unsalvageable and requirements are well understood. Replace when a vendor already solved the problem. Run AI-assisted discovery first, then decide.

7. What is agentic AI and how does it apply to modernization?

Agentic AI systems plan multi-step workflows and orchestrate tasks autonomously. In modernization, agent squads handle documentation, code translation, review, and testing. Gartner predicts 70% of enterprises will deploy them in IT operations by 2029.

8. What are the biggest risks of using AI to modernize legacy code?

Prompt injection via retrieved content, data leaks through unfiltered retrieval, AI code that preserves original bugs, hallucinated business rules, and audit trails that fail compliance review. Governance designed in early mitigates all five.

9. How do regulated industries handle AI-generated code audit requirements?

Treat prompts, model versions, and retrieval indexes as production code with version control. Log every AI-assisted change and approval. Use human-in-the-loop for regulated workflows. Map to NIST AI RMF, ISO 42001, or EU AI Act.

10. What's the difference between AI modernization and digital transformation?

Digital transformation is the whole program: customer experience, new business models, operating models. AI modernization is one slice of that: updating the technical foundation. Legacy systems eventually cap every ambition, so transformation requires modernization underneath.

Where to start this week

If only one thing gets done before next Monday, let it be this: pick a single workflow, write the one-page outcome scorecard, and produce a first-draft system, data, and access map for that workflow.

Those three artifacts will say more about readiness than any vendor demo will. They're free to build. And they will save the team from the most common mistake in this space: funding a pilot before knowing whether the foundation supports it.

The companies that win the next decade aren't the ones with the best model. They're the ones that shipped boring, observable, auditable AI into one workflow, proved it worked, and earned the right to ship the next one.

Which workflow is yours?