Dedicated Team vs Staff Aug vs Fixed Scope for Custom Software Development (US): How to Choose

If you are a VP Engineering or Head of Product at a US mid-market company, choosing an engagement model is not paperwork. It sets the operating system for delivery: who makes which decisions, how changes get handled, and where risk actually sits.

This guide compares Dedicated Team vs Staff Augmentation vs Fixed Scope (often fixed-scope, fixed-price) using a trade-off lens. If you want the broader end-to-end selection flow, start with the buyer's guide.

Why choosing the right software development engagement model matters

This section helps you understand the stakes so you can treat the engagement model as a delivery decision. The right choice reduces rework, renegotiations, and internal friction. The wrong choice can lock you into slow change control or expose you to more management load than you planned.

In practice, procurement and legal will negotiate very differently depending on whether you are buying deliverables, capacity, or individual people. Security and compliance will also care, especially when external engineers need deep access to your environments and Software Development Life Cycle (SDLC).

By the end of this section, you should be able to explain to stakeholders why the model choice affects budget predictability, time to first release, and how painful mid project learning will be.

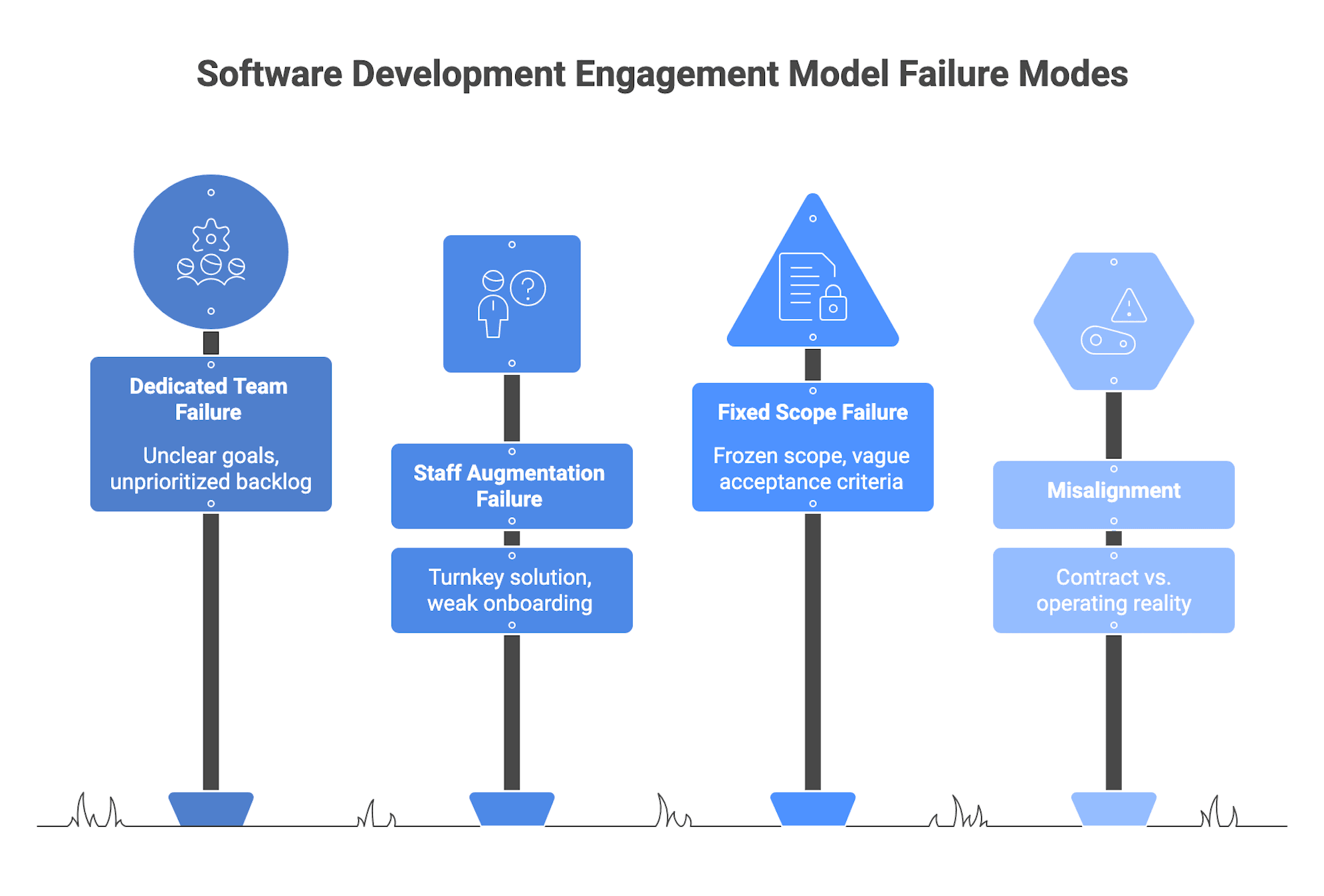

What goes wrong when the model does not match reality

Most failure patterns come from mismatch on either side:

- A rigid commercial structure paired with high uncertainty

- A flexible model paired with weak internal governance

Fixed scope projects with fuzzy or evolving requirements tend to degrade into scope creep and change order battles. Every new insight becomes a negotiation instead of a backlog decision, which can stall delivery and erode trust.

The inverse shows up with Staff Augmentation. If you add people but lack strong product ownership or delivery management, augmented engineers can be busy but misaligned. You carry the full burden of coordination, and output can drift into “hours consumed” reporting instead of outcomes.

Dedicated teams bring more vendor side delivery structure, but they still fail without governance. If you do not have a clear product owner, decision latency and ambiguous acceptance criteria can show up quickly.

Early warning signs in the first two to six weeks often include:

- Sprint reviews with little working software

- Repeated disagreements on what “done” means

- A growing list of “parked” change requests

- Status reports that emphasize hours and activity over outcomes

Corrective actions tend to be straightforward, but they must be explicit:

- Tighten Acceptance Criteria and agree on a Definition of Done

- Formalize a change order process for fixed scope

- Appoint or empower a product owner for team based models

- Define decision rights and escalation paths so decisions do not stall

If you already recognize these patterns internally, treat them as input into model choice, not as issues you will “fix later” after signing.

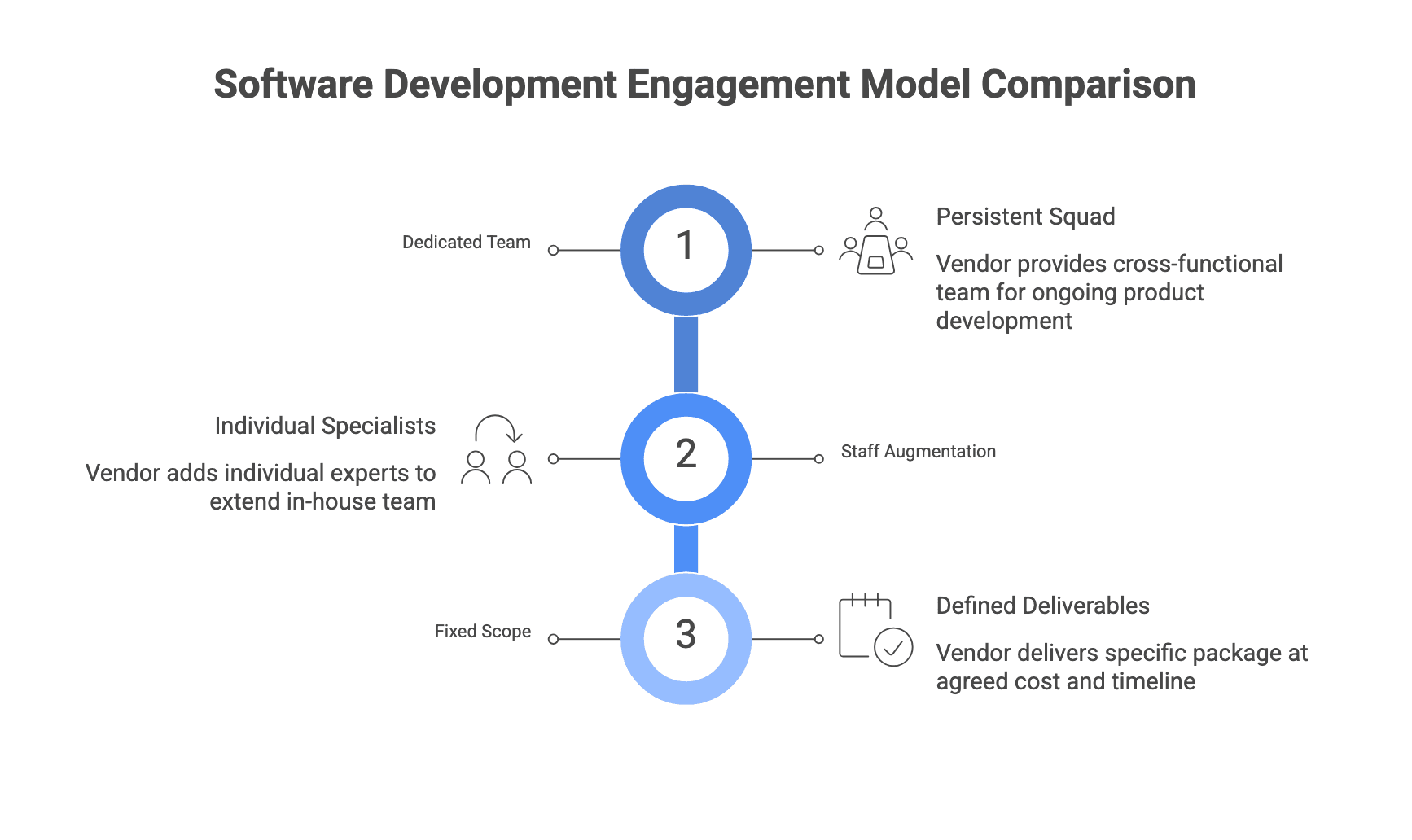

The simplest way to think about the three models

A clean mental model rests on two questions: what do you pay for, and who owns delivery?

- Dedicated Team is capacity based and vendor managed delivery. You buy a cross functional team over time, often on Time and Materials (T&M) or a monthly retainer tied to capacity. You own product direction, while the vendor runs execution.

- Staff Augmentation is renting individual specialists who plug into your team. They work in your tools and processes under your managers. You retain control and accountability for delivery.

- Fixed Scope is deliverable based. You contract for defined outputs at an agreed price and timeline, with a detailed Statement of Work (SOW), Acceptance Criteria, and a formal Change Request and change order process.

One recurring issue in US RFPs is “label drift.” Vendors sometimes call a capacity arrangement “fixed scope” or a managed team “staff aug.” The sanity check is simple: are you paying for named individuals, a reserved team, or specific deliverables, and who is actually responsible for day to day delivery?

At this point, you should be able to state the model choice in one sentence: “We are buying deliverables” or “We are buying capacity” or “We are buying people.”

Dedicated team vs Staff augmentation vs Fixed scope

This section helps you compare what changes in practice: governance, change handling, accountability, and management load. The goal is to find the best fit for your specific project reality.

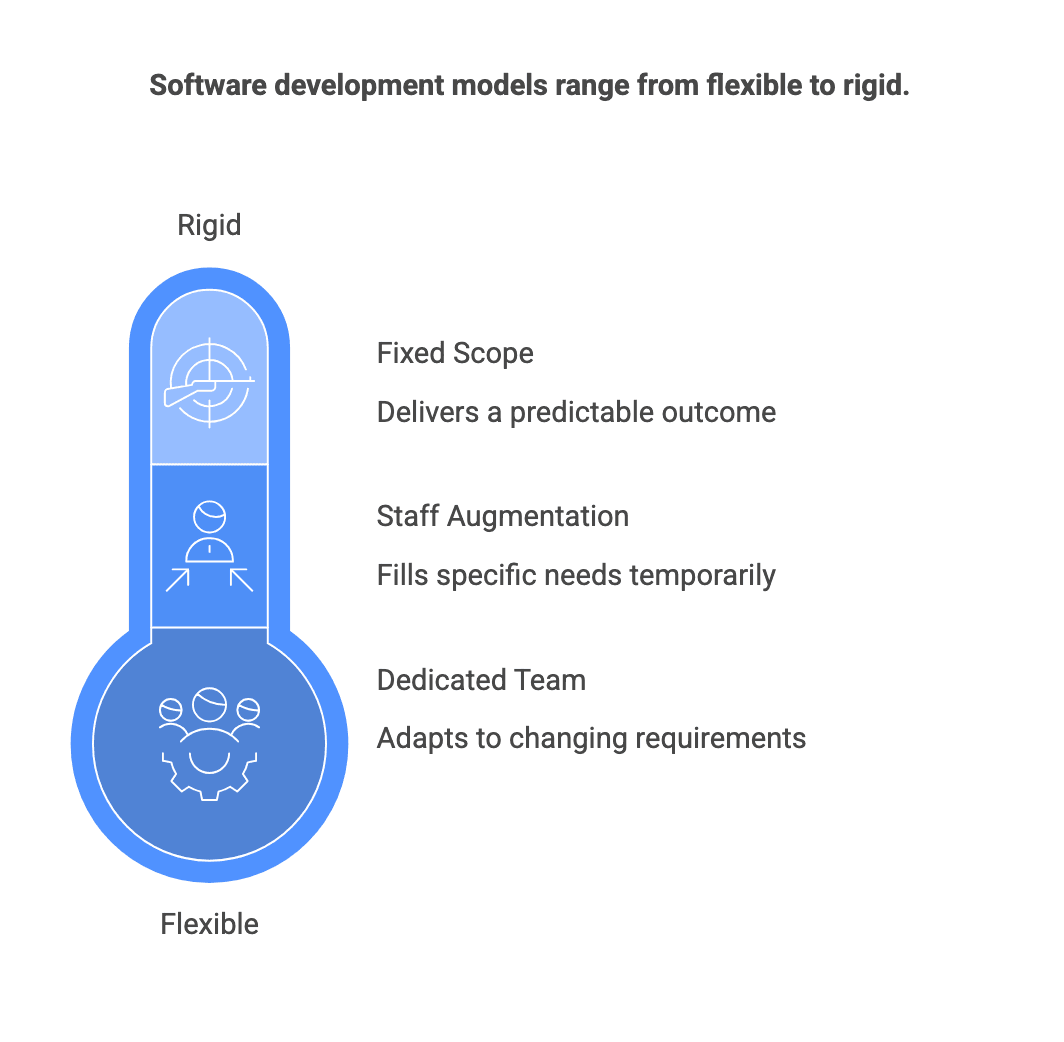

As you read, keep one question open: do you need flexibility most, or predictability most, and do you have the internal bandwidth to run delivery?

Engagement Model Definitions (Buyer-Friendly): What Each Outsourcing Model Really Means

Dedicated Team

You pay for a persistent, cross functional squad allocated primarily or exclusively to your product. The vendor typically supplies delivery roles like a delivery manager, Scrum Master, Quality Assurance (QA) lead, and engineering leadership. You provide a product owner and stakeholders. Commercially this is often T&M or a monthly retainer tied to team capacity, with governance and ways of working defined in the contract but deliverables left open to reprioritization.

Staff Augmentation

You pay for individual person-days or full-time equivalents (FTEs) to extend your in-house team. Augmented staff work inside your processes, tools, and ceremonies under your managers. The contract usually consists of role definitions, rate cards, and assignment terms rather than a feature level SOW. You supply product management and technical leadership, and accountability remains internal.

Fixed Scope (fixed scope fixed price)

You pay for specific deliverables at an agreed cost and timeline. The SOW typically includes functional and non functional requirements, Acceptance Criteria, acceptance testing process, a change order mechanism, and defect or warranty terms. You participate in reviews and acceptance and provide subject matter expertise, but the vendor is contractually responsible for delivering the agreed package.

If a proposal feels ambiguous, ask the vendor to walk through a real example of how a Change Request is initiated, estimated, approved, and accepted under their model.

Summary of differences

This comparison is a shortcut for stakeholder alignment. Use it to surface where your team will spend time and where risk concentrates.

| Model | You pay for | Who runs delivery | How changes work |
|---|---|---|---|
| Dedicated Team | Team capacity over time | Vendor runs execution; you steer product | Reprioritize backlog; cost driven by time and team size |
| Staff Augmentation | Individual specialists | You run delivery | You reprioritize; you manage coordination and quality |
| Fixed Scope | Defined deliverables | Vendor runs execution to SOW | Change Requests become change orders with cost and schedule impact |

Two practical implications often surprise buyers:

- Acceptance looks different. Dedicated team and staff aug tend to accept work continuously via demos, Definition of Done, and environment based testing. Fixed scope relies more on formal acceptance steps and sign offs.

- Continuity differs. Dedicated teams are built for ongoing continuity and domain learning. Fixed scope teams may disband after delivery, which makes handover artifacts and knowledge transfer more important.

If your stakeholders disagree on “who owns quality” or “who owns delivery,” resolve that before comparing rate cards or timelines.

Best-fit scenarios for each model

This section helps you map real situations to the model that tends to fit, including counterexamples so you do not over apply a pattern.

Dedicated Team tends to fit when:

- Your roadmap will evolve, and you expect learning and iteration

- You want continuity and deeper domain knowledge over multiple releases

- You can provide product ownership, but you do not want to manage every engineer day to day

Micro scenario: a mid market SaaS platform modernizing a core workflow while learning from user feedback each sprint. Requirements will change. You need steady throughput and fast reprioritization.

Counterexample: a one-off, tightly constrained build with no planned evolution, where a long-lived team would be underutilized after delivery.

Staff Augmentation tends to fit when:

- You have strong internal product and engineering leadership

- You need capacity fast or a specific skill gap for a period of time

- The work must embed deeply into your repos, pipelines, and internal standards

Micro scenario: an internal platform team needs a cloud specialist and a mobile engineer for a set of milestones, but wants to keep full control of architecture and release decisions.

Counterexample: using staff aug as a substitute for product ownership or delivery management. If your internal leadership is already stretched, adding more people can increase coordination cost and slow decisions.

Fixed Scope tends to fit when:

- Scope can be specified with reasonable completeness and change is expected to be limited

- You need upfront budget predictability for a defined package of work

- You are prepared to invest in detailed Acceptance Criteria and disciplined change control

Micro scenario: a compliance related module with stable requirements and a clear acceptance test plan, where the definition of “done” can be written in testable terms.

Counterexample: a build that touches legacy systems with unknown complexity and shifting stakeholder opinions. “Predictability” on paper can become a sequence of change orders and tense renegotiations.

If you cannot describe your project as “evolving product” or “well specified package,” treat that ambiguity as a signal to consider a discovery first approach.

Decision Criteria for US Mid-Market Software Outsourcing

This section helps you evaluate fit based on project reality.

These criteria show up consistently across practical guidance.

- Requirements maturity and volatility

Stable and documented favors fixed scope. Emerging and iterative favors team based models.

- Governance capacity

Staff augmentation assumes you can run backlog, quality, and day to day delivery. Dedicated teams reduce that burden but still require an engaged product owner. Fixed scope shifts effort into upfront specification and formal acceptance.

- Integration depth

Deep integration into your repos, CI/CD, observability, and incident processes aligns well with staff augmentation or a tightly integrated dedicated team. More isolated modules can sometimes fit fixed scope.

- Urgency and time to start

Staff augmentation is often fastest to add capacity. Dedicated team and fixed scope usually need more upfront ramp up or discovery.

- Risk appetite and decision latency

If decisions are slow or fragmented, team based models can drift. If you choose fixed scope without disciplined change control, hidden costs and disputes become more likely.

A useful way to use these criteria is to score your project honestly, then pick the model that matches your strongest constraints rather than your preferred contract type.
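To make the scoring step concrete, here is a minimal Python sketch, assuming a hypothetical 1 to 5 self-assessment per criterion. The criterion names, the "3 is neutral" convention, and the mapping from each criterion to the models it favors are illustrative assumptions paraphrased from the list above, not a standard instrument. Weighting each criterion by its distance from neutral is one way to let your strongest constraints, rather than your preferred contract type, dominate the result. Decision latency and risk appetite are left out because they read more as risks to fix than as model selectors.

```python
from collections import Counter

# Hypothetical 1-5 self-assessment per criterion (1 = low, 3 = neutral, 5 = high).
# Each entry maps to (models favored when the score is high, models favored when low).
# This mapping paraphrases the criteria above; it is an assumption, not a standard.
CRITERIA = {
    "requirements_stability": (["Fixed Scope"], ["Dedicated Team", "Staff Augmentation"]),
    "governance_capacity": (["Staff Augmentation"], ["Dedicated Team"]),
    "integration_depth": (["Staff Augmentation", "Dedicated Team"], ["Fixed Scope"]),
    "urgency": (["Staff Augmentation"], []),
}


def rank_models(scores: dict[str, int]) -> list[tuple[str, int]]:
    """Rank models so that strong constraints (far from neutral) dominate."""
    totals = Counter({"Dedicated Team": 0, "Staff Augmentation": 0, "Fixed Scope": 0})
    for criterion, (if_high, if_low) in CRITERIA.items():
        strength = scores[criterion] - 3  # -2..+2; a neutral score adds nothing
        favored = if_high if strength > 0 else if_low
        for model in favored:
            totals[model] += abs(strength)
    return totals.most_common()


# Example: evolving product, strong internal leadership, deep integration, some urgency.
ranking = rank_models({
    "requirements_stability": 2,
    "governance_capacity": 5,
    "integration_depth": 5,
    "urgency": 4,
})
print(ranking)  # [('Staff Augmentation', 6), ('Dedicated Team', 3), ('Fixed Scope', 0)]
```

A tie or a narrow margin is itself useful information: treat it as a reason to run discovery or a pilot rather than as a close call to settle by preference.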

Trade-offs and failure modes

This section helps you avoid “model theater,” where the contract says one thing but delivery runs like another. Each model has predictable ways it fails.

Dedicated Team failure modes

- The engagement becomes expensive staff augmentation because goals are unclear

- The backlog is unprioritized, so throughput looks low and value is hard to show

- Product ownership is inconsistent, causing decision latency and rework

Early signs include sporadic backlog grooming and sprint reviews that defer acceptance repeatedly.

Staff Augmentation failure modes

- Augmented staff are treated as a turnkey delivery solution

- Onboarding is weak, so engineers work in silos and integration pain appears later

- Internal teams complain about management overhead without throughput gains

Mitigations include defining a RACI matrix (Responsible, Accountable, Consulted, Informed), standardizing coding and review practices, and ensuring an internal lead owns outcomes.
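A RACI matrix is easy to draft and easy to leave with gaps. The sketch below, using hypothetical activities and role names, checks the common RACI convention that each activity has exactly one Accountable role and at least one Responsible role. In this example it surfaces an onboarding activity with no accountable owner, which is the kind of gap behind the "onboarding is weak" failure mode.

```python
# Hypothetical RACI matrix for a staff augmentation engagement.
# Codes per role: "R" Responsible, "A" Accountable, "C" Consulted, "I" Informed;
# "AR" means the same role is both accountable and doing the work.
RACI = {
    "Backlog prioritization": {"Internal product owner": "AR", "Internal eng lead": "C", "Augmented engineers": "I"},
    "Code review": {"Internal eng lead": "A", "Augmented engineers": "R"},
    "Onboarding and access": {"Internal eng lead": "R", "Vendor account manager": "C"},
}


def raci_gaps(matrix: dict[str, dict[str, str]]) -> list[str]:
    """Flag activities that break the usual RACI rules: one Accountable, at least one Responsible."""
    gaps = []
    for activity, roles in matrix.items():
        accountable = [role for role, code in roles.items() if "A" in code]
        responsible = [role for role, code in roles.items() if "R" in code]
        if len(accountable) != 1:
            gaps.append(f"{activity}: expected exactly one Accountable, found {len(accountable)}")
        if not responsible:
            gaps.append(f"{activity}: nobody is Responsible")
    return gaps


print(raci_gaps(RACI))  # ['Onboarding and access: expected exactly one Accountable, found 0']
```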

Fixed Scope failure modes

- Scope is frozen on shaky ground

- Acceptance Criteria are vague or not testable

- Change control becomes informal, creating disputes and scope creep

Early signs include long debates about requirement interpretation, rapid emergence of “must have” changes, and pressure to squeeze in extra work without formal change orders.

Across all three, the most common cross cutting failure is misalignment between contract mechanics and operating reality. The fix is to align contract language, governance, and expectations so they describe the same model.

If you can only fix one thing to reduce failure risk, fix decision rights: who can approve scope changes, who can accept work, and how fast decisions will happen.

Recommendation logic (context-driven)

This section gives conditional rules you can use to make a defensible choice.

- If requirements are still evolving and you expect to iterate based on user feedback, a Dedicated Team is usually safer because the model is designed for reprioritization.

- If you have strong internal leadership and the main problem is capacity or a specific skill gap, Staff Augmentation is often the most pragmatic option, especially for deeply embedded work.

- If scope is narrow, well specified, and tied to external constraints like a compliance deadline, Fixed Scope can fit, as long as you accept tighter change control and invest in contract detail.

- If uncertainty is high across multiple dimensions (scope, technology, stakeholders), consider a discovery first phase, using a smaller fixed scope or time boxed engagement to clarify requirements, de-risk architecture, and produce a backlog and roadmap before choosing a long term model.

Notice what is not in these rules: “fixed scope is safest” or “dedicated team is always better.” The model is only as strong as its prerequisites.

Before you lock in, write down which prerequisites you are confident you already have, and which you still need to establish.

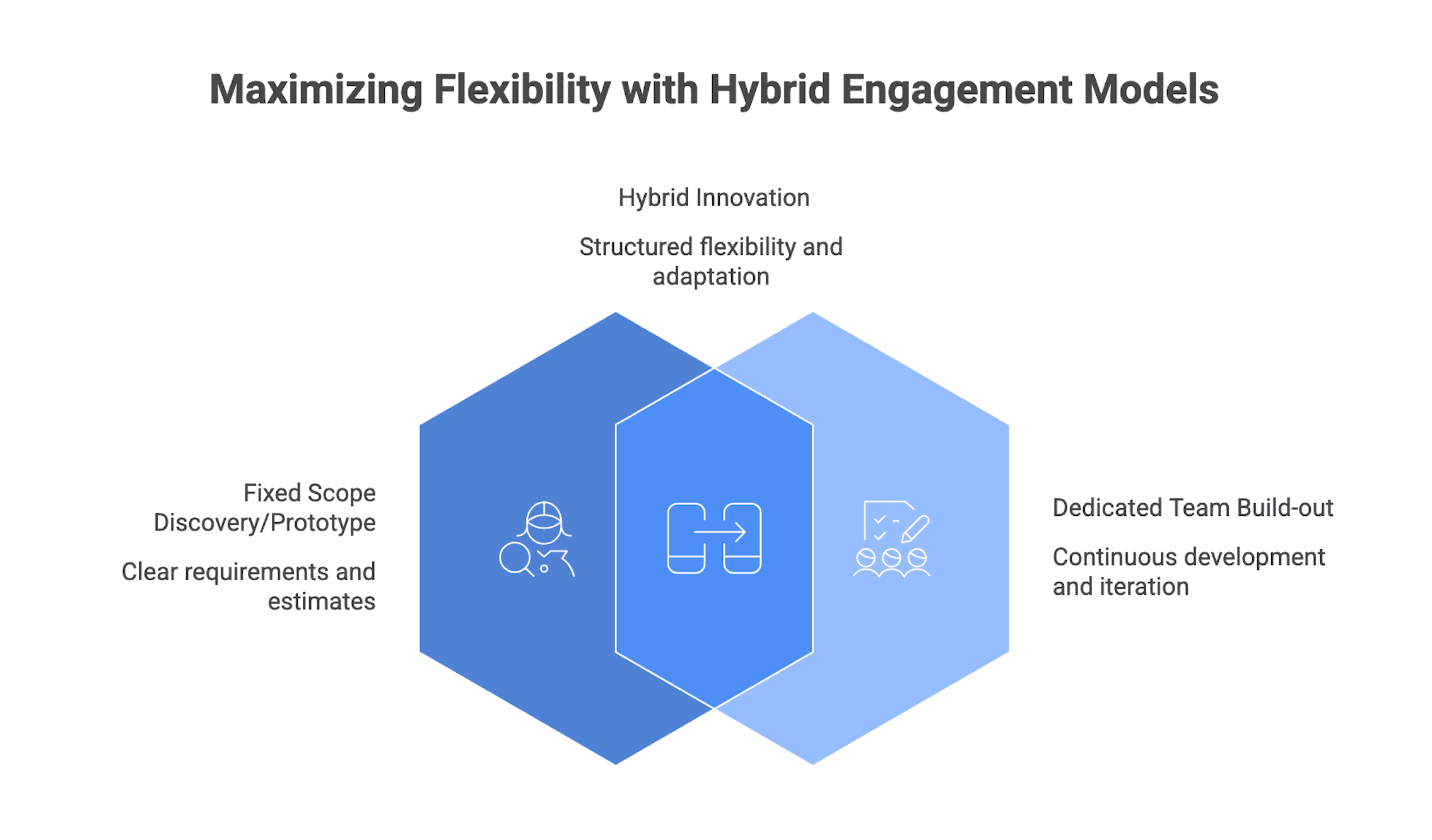

Common hybrid approaches

This section helps you use hybrids intentionally.

Two patterns show up often:

- Fixed scope discovery or prototype, then Dedicated Team for build out

Discovery produces clearer requirements, a more grounded backlog, and a better estimate basis. Then the dedicated team supports ongoing iteration once the biggest unknowns are resolved.

- Dedicated core team, supplemented by Staff Augmentation for short spikes

For example, add a DevOps or data specialist for part of the roadmap without committing to that role long term.

Hybrids create friction when accountability is unclear. If one vendor delivers a fixed scope pilot while another runs a dedicated team, integration defects can trigger finger pointing unless handover responsibilities are explicit.

If you plan a hybrid, consider structuring phases as separate SOWs under a Master Services Agreement (MSA), with clear acceptance criteria, exit conditions, and a governance plan that ties phases together. Also plan for coordination cost: integrated backlogs, cross vendor alignment on standards, and a named internal owner to manage the whole system.

A hybrid should make the decision more reversible.

What to evaluate before you pick an engagement model

This section helps you diagnose your reality before you pick a model. The fastest way to make a bad choice is to assume you have stable scope, or assume you have management bandwidth, without evidence.

Treat these as internal alignment questions you can answer in a single working session with product, engineering, and a procurement or legal partner.

Requirements maturity and scope volatility

This is the first gate because fixed scope only works when “fixed” is realistic.

Indicators you are closer to stable scope:

- Requirements are documented and stakeholders largely agree on priorities

- Dependencies and integrations are well understood

- The solution space is familiar (similar to previous systems)

Indicators scope is volatile:

- Stakeholders change their minds frequently

- User needs are still being discovered

- Legacy system complexity is uncertain or undocumented

When uncertainty is high, structured discovery can help. Common discovery activities include stakeholder interviews, process mapping, technical spikes, and prototyping. Typical outputs are a scoped backlog, architecture options, a risk register, and more grounded estimates. Those outputs can then support either a better fixed scope SOW or a more confident dedicated team plan.

If you cannot write Acceptance Criteria that are testable, assume you are not ready for full fixed scope.

Delivery ownership and management bandwidth

This gate determines whether staff augmentation is realistic, and how much leverage a dedicated team will actually provide.

Reality by model:

- In Staff Augmentation, you own backlog management, task assignment, quality standards, and release decisions. Augmented staff contribute to your system, but they do not replace management.

- In a Dedicated Team, the vendor typically runs ceremonies, tracking, and risk management, while you still provide product ownership and participate in prioritization and reviews.

- In Fixed Scope, the vendor is responsible for delivering the agreed outputs, but you invest heavily upfront in specification and later in review, testing, and formal acceptance.

If your product owner and engineering leads are already overburdened, staff augmentation can worsen decision latency and create idle or misdirected work. In that case, a dedicated team or a discovery first phase may be safer.

A simple internal test is to estimate weekly time commitment for product ownership and delivery decisions. If you cannot staff that time, the model will suffer.

Team integration needs (tooling, access, and ways of working)

This gate is about operational reality: access, onboarding, and the cost of integrating external engineers into your SDLC.

In staff augmentation and dedicated team models, external personnel often need:

- Access to code repositories and CI/CD pipelines

- Access to issue trackers, documentation, and observability tools

- Participation in SDLC gates, release processes, and sometimes incident processes

That implies onboarding into identity and access management, least privilege access, and alignment with standards like branching strategy, code review practices, and testing expectations.

Fixed scope can sometimes be structured with the vendor using their own tooling and handing off at milestones, but integration projects eventually need shared interfaces and test environments. In regulated contexts, security and compliance often impose controls regardless of model.

If your organization has constrained access patterns or slow onboarding, factor that into ramp up time and delivery risk.

Before choosing, align on “ways of working” basics: Definition of Done, testing expectations, code review, and release criteria.

Risk allocation in contracts (change control and acceptance)

This gate translates contract mechanics into outcomes. It matters because “risk transfer” is often assumed, but rarely free.

In fixed scope agreements, change order provisions are central:

- Any change to scope, specifications, deliverables, or timeline is requested in writing

- The vendor evaluates impact

- You approve cost and schedule adjustments before work proceeds
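The change order steps above behave like a small state machine: a written request, a vendor impact assessment, a buyer decision, and only then an update to price, date, and Acceptance Criteria together. Here is a minimal Python sketch of that flow; the class names, field names, and figures are hypothetical and do not reflect any particular vendor's process or contract template.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class ChangeRequest:
    """Hypothetical record of one written change request under a fixed scope SOW."""
    description: str
    status: str = "requested"  # requested -> assessed -> approved | rejected
    cost_delta: float = 0.0
    schedule_delta_days: int = 0

    def assess(self, cost_delta: float, schedule_delta_days: int) -> None:
        # Vendor step: evaluate cost and schedule impact of the written request.
        if self.status != "requested":
            raise ValueError("only a newly requested change can be assessed")
        self.cost_delta, self.schedule_delta_days = cost_delta, schedule_delta_days
        self.status = "assessed"

    def decide(self, approved: bool) -> None:
        # Buyer step: approve or reject only after the impact is known.
        if self.status != "assessed":
            raise ValueError("decide only after impact assessment")
        self.status = "approved" if approved else "rejected"


@dataclass
class FixedScopeContract:
    price: float
    due: date
    acceptance_criteria: list[str] = field(default_factory=list)

    def apply(self, cr: ChangeRequest, new_criteria: list[str]) -> None:
        # Work proceeds only on approved changes; price, date, and criteria move together.
        if cr.status != "approved":
            raise ValueError("change is not approved; work should not proceed")
        self.price += cr.cost_delta
        self.due += timedelta(days=cr.schedule_delta_days)
        self.acceptance_criteria.extend(new_criteria)


contract = FixedScopeContract(100_000.0, date(2025, 6, 30), ["Monthly report exports to PDF"])
sso = ChangeRequest("Add single sign-on")
sso.assess(cost_delta=8_000.0, schedule_delta_days=10)
sso.decide(approved=True)
contract.apply(sso, ["Users can sign in with the company identity provider"])
print(contract.price, contract.due)  # 108000.0 2025-07-10
```

The design choice worth copying into a real SOW is the last method: an approved change updates cost, timeline, and acceptance criteria in one step, so none of the three silently drifts from the others.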

Acceptance clauses typically specify objective criteria, testing procedures, acceptance periods, and remediation steps if defects are found. Some contracts include deemed acceptance if you do not respond within a defined window.

In capacity based models (dedicated team and staff augmentation), contracts focus more on roles, rates, time tracking, termination or notice periods, and responsibilities. Change control is less rigid because scope is expected to evolve. Risk is managed through transparency, governance, and the ability to adjust team size.

Across all models, pay attention to:

- Intellectual property ownership and license terms

- Confidentiality and data handling (often under an NDA)

- Substitution rights for personnel

- Warranty and defect terms post acceptance

- Termination rights and handover obligations, including knowledge transfer

If you cannot explain how a Change Request updates acceptance criteria and timeline, assume you have a contract ambiguity that will surface later.

Cost predictability vs total cost of change

This gate helps you set expectations with finance and procurement.

Fixed scope often provides high predictability at the outset: you know the contracted cost for the defined scope. But total cost can rise when requirements evolve, because each change becomes a negotiation with fees and schedule impact. There is also a risk that a vendor protects margin by cutting corners if estimates prove optimistic, which can show up later as technical debt and higher maintenance cost.

Time and Materials models (dedicated team and staff augmentation) have more variable total cost because spend depends on duration and team size. They can be more efficient when you need to adapt scope frequently because you are not renegotiating every change, but you need budget guardrails and outcome based reporting.
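A quick worked comparison helps separate "predictable at signing" from "predictable at the end." All figures below are hypothetical placeholders, and the 80% guardrail threshold is an assumption rather than an industry norm. The arithmetic simply restates the paragraph above: a fixed scope total is the contract price plus every approved change order, while a T&M total is team size times rate times duration, which is why T&M spend needs an explicit guardrail.

```python
# Hypothetical figures for illustration only; substitute your own quotes and rates.

def fixed_scope_total(contract_price: float, change_orders: list[float]) -> float:
    """Contracted price plus every approved change order."""
    return contract_price + sum(change_orders)


def tm_total(team_size: int, monthly_cost_per_person: float, months: int) -> float:
    """Capacity-based spend depends on team size and duration, not on how often scope changes."""
    return team_size * monthly_cost_per_person * months


def guardrail_tripped(spend_to_date: float, budget_cap: float, threshold: float = 0.8) -> bool:
    """Budget guardrail: alert once spend reaches a share of the cap (80% here is an assumption)."""
    return spend_to_date >= threshold * budget_cap


fixed = fixed_scope_total(400_000, [25_000, 40_000, 15_000])
tm = tm_total(team_size=5, monthly_cost_per_person=11_000, months=8)
print(fixed, tm)                        # 480000 440000
print(guardrail_tripped(360_000, tm))   # True
```

In this made-up case the fixed scope engagement was quoted at 400,000 and finished at 480,000 after three change orders, while the T&M plan forecast 440,000 and was already past its guardrail at 360,000 of spend, which is the point to revisit scope or team size.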

At minimum, align internally on what “predictability” means: predictable monthly burn, predictable delivery milestones, or predictable total project cost. Different models optimize different versions of predictability.

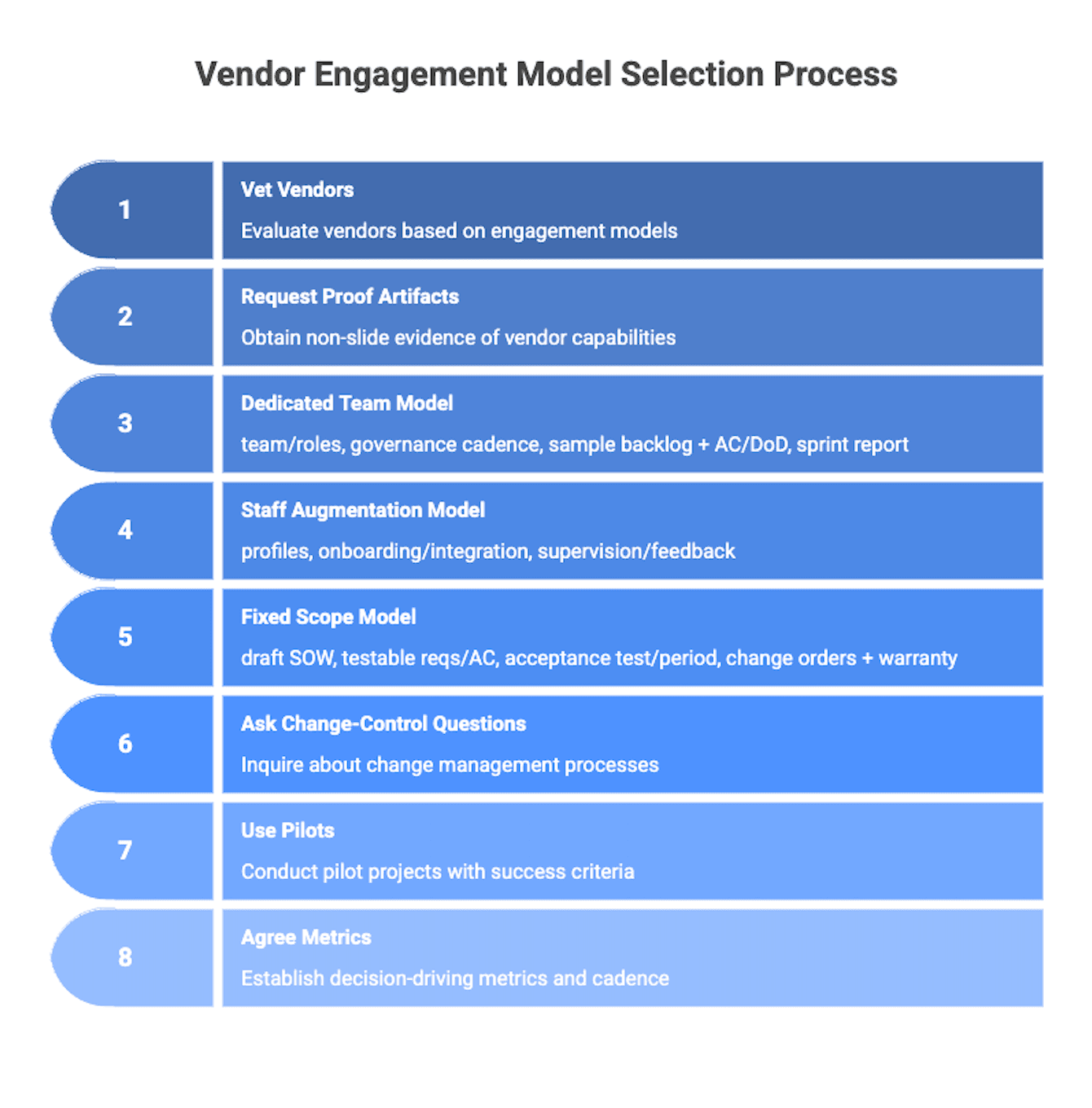

How to Vet Software Development Vendors by Engagement Model

This section helps you turn preference into verification. Most model failures are visible early if you request the right artifacts and ask the right questions.

The goal is to confirm they actually run the model they are selling, and that you can support it with your governance and access realities.

Artifacts to request

Ask for artifacts that prove operating capability instead of marketing slides.

Dedicated Team:

- Proposed team composition and role descriptions

- Governance plan: ceremonies, reporting cadence, escalation paths

- Sample backlog with Acceptance Criteria and Definition of Done

- Example sprint report or dashboard that shows outcomes

Staff Augmentation:

- Sample profiles and role definitions tied to your needs

- Onboarding and integration plan: tools, standards, access, and expected ramp up

- Clarity on who supervises day to day work and how performance feedback works

Fixed Scope:

- A draft SOW with scope, assumptions, dependencies, and exclusions

- Functional and non functional requirements and Acceptance Criteria that are testable

- Acceptance testing procedure and acceptance period details

- Change order process and defect or warranty terms

Across all models, ask for QA artifacts: test strategy outline, release criteria, and how defects are handled after acceptance or release.

If a vendor cannot provide model appropriate artifacts early, treat that as a risk signal.

Questions to ask in vendor calls (and what good answers include)

Use questions that force a walkthrough of real process.

On change control:

- “How do you handle changes in requirements after we start?”

- “Walk through a recent Change Request. What happened to timeline and cost?”

- “Who approves changes, and how fast do you expect decisions?”

Good answers reference a disciplined written process, impact analysis, and options.

On delivery ownership:

- “Who runs day to day delivery on your side and on ours?”

- “What decisions do you need from our product owner each week?”

- “What does escalation look like when decisions block progress?”

Good answers include clear decision rights and a governance cadence.

On transparency and quality:

- “What reports and metrics will we see each week or sprint?”

- “How do you handle defects found after acceptance or after release?”

- “How do you define done, and how do you enforce it?”

Strong answers talk about incremental demos, defect trends, and quality gates.

If the vendor’s answers are vague, assume the model will drift in execution.

Pilot options, trial periods, and exit ramps

If uncertainty is high or collaboration risk feels meaningful, a small pilot can reduce lock in.

Examples of pilots by model:

- For dedicated teams or staff augmentation: one or two sprints on a contained feature with clear success criteria

- For fixed scope: a limited discovery or prototype phase with defined outputs

A thoughtful pilot has:

- Clear success criteria and a decision point to expand or stop

- Short initial terms

- Explicit exit and handover provisions, including documentation and knowledge transfer expectations

US buyers often negotiate termination for convenience with notice periods, plus handover obligations such as repository transfer, runbooks, architecture diagrams, and transition sessions. These terms matter more in long term engagements, but they are also useful in shorter pilots.

If you cannot design a reversible first step, you are taking more lock in risk than you need.

Success metrics and reporting cadence

Metrics are only useful if they drive decisions. Agree upfront on what will be reported, how often, and who interprets it.

For agile team based delivery (dedicated team and staff augmentation), commonly useful signals include:

- Throughput and cycle time trends

- Predictability: forecast accuracy and commitments met

- Quality: defect trends and escaped defects

- Stakeholder satisfaction and decision latency
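If your issue tracker can export start and finish dates per item, several of these signals reduce to simple arithmetic. The sketch below uses a hypothetical `WorkItem` record and computes throughput, median cycle time, the share of sprint commitments met, and escaped defects as a share of all defects found. These definitions are one reasonable choice among several, not a standard; agree on yours with the vendor before the first report.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median
from typing import Optional


@dataclass
class WorkItem:
    started: date
    finished: Optional[date]  # None if the item was not done by sprint end
    committed: bool           # True if it was in the sprint commitment at planning


def sprint_signals(items: list[WorkItem], escaped: int, caught_before_release: int) -> dict:
    """Compute a few delivery signals for one sprint from hypothetical tracker data."""
    done = [i for i in items if i.finished is not None]
    committed = [i for i in items if i.committed]
    met = [i for i in committed if i.finished is not None]
    total_defects = escaped + caught_before_release
    return {
        "throughput": len(done),
        "median_cycle_time_days": median((i.finished - i.started).days for i in done) if done else None,
        "commitments_met": len(met) / len(committed) if committed else None,
        "escaped_defect_share": escaped / total_defects if total_defects else 0.0,
    }


items = [
    WorkItem(date(2025, 3, 3), date(2025, 3, 6), committed=True),
    WorkItem(date(2025, 3, 3), date(2025, 3, 10), committed=True),
    WorkItem(date(2025, 3, 5), None, committed=True),
    WorkItem(date(2025, 3, 6), date(2025, 3, 7), committed=False),
]
print(sprint_signals(items, escaped=1, caught_before_release=3))
```

Tracking these per sprint and watching the trend is more informative than any single value.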

For fixed scope, milestone tracking matters, but it is not enough without quality visibility. Status that only reports percent complete can hide risk until acceptance.

Avoid vanity dashboards. If a metric does not lead to a decision, replace it.

Once you align on reporting cadence and success signals, you can spot drift early and adjust team size, scope, or governance before problems compound.

Quick Selection Method (then verify)

This section is a fast path to a defensible choice. It is designed for an internal alignment session where engineering, product, and procurement want a clear recommendation and a short list of verification actions.

Use this framework to narrow to a likely model, then validate with artifacts and a pilot where needed.

The “if X then Y” decision tree

Start with three questions: scope stability, delivery ownership, and integration depth.

- How stable is scope?

  - If scope is stable and testable, and you want a fixed budget, shortlist Fixed Scope.

  - If scope is evolving or you expect learning, shortlist Dedicated Team or Staff Augmentation.

- Who will own day to day delivery?

  - If you have strong internal leadership and want maximum control, shortlist Staff Augmentation.

  - If you can provide product ownership but want vendor side delivery management, shortlist Dedicated Team.

- How tightly must work integrate with your systems?

  - If work must embed into your repos, pipelines, and standards, Staff Augmentation or a tightly integrated Dedicated Team tends to fit.

  - If work can be more isolated and handed off as deliverables, Fixed Scope may be manageable.

Edge case rule: if uncertainty is high across scope and technical risk, start with discovery first, then re-evaluate.
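Here is one possible encoding of that tree in Python, with the edge case rule checked first. The parameter names are hypothetical, and collapsing each question to yes or no is a simplification: for example, stable scope combined with deep integration is routed to a team based model here, which follows the integration question but remains a judgment call.

```python
def likely_model(
    scope_stable_and_testable: bool,
    want_fixed_budget: bool,
    strong_internal_leadership: bool,
    must_embed_in_our_systems: bool,
    high_uncertainty: bool,
) -> str:
    """One possible encoding of the three-question tree plus the edge case rule."""
    # Edge case first: high uncertainty across scope and technical risk.
    if high_uncertainty:
        return "Discovery first, then re-evaluate"
    # Scope and integration questions: stable, testable scope, fixed budget, isolated work.
    if scope_stable_and_testable and want_fixed_budget and not must_embed_in_our_systems:
        return "Fixed Scope"
    # Ownership question: evolving or deeply embedded work, decided by who runs delivery.
    if strong_internal_leadership:
        return "Staff Augmentation"
    return "Dedicated Team"


print(likely_model(True, True, False, False, False))   # Fixed Scope
print(likely_model(False, False, True, True, False))   # Staff Augmentation
print(likely_model(False, False, False, True, False))  # Dedicated Team
```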

Once you pick a likely model, convert it into concrete requests: governance plan, SOW detail, onboarding plan, and clear decision rights.

Disqualifiers and red flags (model-specific)

This section prevents obvious misfits.

Fixed Scope red flags:

- Acceptance Criteria are not testable yet

- Stakeholders frequently change priorities or interpretation of requirements

- You do not have disciplined change control capability

- The SOW is vague on assumptions, exclusions, and acceptance process

Staff Augmentation red flags:

- There is no named internal owner to manage augmented staff

- Onboarding and engineering standards are immature

- The business expects the provider to “own outcomes” despite the staff aug structure

Dedicated Team red flags:

- You cannot provide a consistent product owner

- Governance cadence is undefined (planning, demos, retrospectives)

- Tooling and access constraints prevent efficient work

- You want fixed price predictability but also want continuous reprioritization

If any disqualifier applies, either choose a different model or fix the prerequisite first.
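Because a single disqualifier is enough to rule a model out until the prerequisite is fixed, the check is a set intersection rather than a score. A minimal sketch, where the flag names are hypothetical shorthand for the red flags listed above:

```python
# Red flags from the lists above, keyed by model; flag names are hypothetical labels.
RED_FLAGS = {
    "Fixed Scope": {"untestable_acceptance_criteria", "shifting_priorities", "no_change_control", "vague_sow"},
    "Staff Augmentation": {"no_internal_owner", "immature_onboarding", "expects_vendor_to_own_outcomes"},
    "Dedicated Team": {"no_consistent_product_owner", "undefined_governance_cadence",
                       "access_constraints", "wants_fixed_price_and_reprioritization"},
}


def disqualifiers(observed: set[str]) -> dict[str, set[str]]:
    """For each model, the observed red flags that block it; an empty set means no disqualifier."""
    return {model: flags & observed for model, flags in RED_FLAGS.items()}


found = disqualifiers({"no_internal_owner", "shifting_priorities"})
still_open = [model for model, flags in found.items() if not flags]
print(still_open)  # ['Dedicated Team']
```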

What to do if you are still on the fence

When uncertainty remains high, the safest next step is usually to start smaller and make the decision reversible.

A common approach is a time boxed discovery or proof of concept that focuses on:

- Clarifying requirements and Acceptance Criteria

- Exploring technical risks and integration complexity

- Producing a scoped backlog and delivery plan

- Testing collaboration and governance in practice

During this phase, observe how decisions get made, how transparent reporting is, and how quality is handled. Then re-apply the criteria with better information. If the evidence points to a longer build, move from discovery into a dedicated team or staff augmentation plan. If scope stabilizes, a better fixed scope SOW may become realistic.

The goal is to avoid committing to a long term model before you have enough evidence.

Conclusion

The core trade-off is consistent: you are balancing predictability vs flexibility, and internal governance capacity vs outsourced delivery risk. Dedicated teams and staff augmentation give adaptability and tighter integration, but require sustained product ownership and management attention. Fixed scope offers upfront budget certainty and clearer risk transfer on a defined package, but demands mature requirements and disciplined change control.

A defensible next step is to pick a likely model using the decision tree, then verify fit before signing. Concretely, that means requesting model appropriate artifacts (SOW detail, governance plan, backlog with Acceptance Criteria), asking process questions about change control and delivery ownership, and using a small pilot or discovery phase when uncertainty is high. In parallel, align internally on who will act as product owner, who will manage the vendor day to day, and how success will be measured.