How to Review a Custom Software Vendor’s Case Studies: Signal vs Noise

Case studies matter because they often shape which vendors make your shortlist. They also mislead buyers when polished storytelling stands in for delivery evidence.

A public case study can show that a custom software vendor has worked in a certain environment and can explain a business problem coherently. It cannot, on its own, prove repeatable delivery quality, governance maturity, or fit for your constraints. Treat it as a starting artifact.

Why custom software vendor case studies matter

Most buyer teams want proof that a vendor has solved similar problems before. That makes case studies influential early, especially when multiple vendors look competent on the surface.

The problem is that case studies are marketing assets. They naturally highlight wins, compress trade-offs, and leave out details that matter most in buyer-side due diligence. A strong story can still hide unclear scope, weak attribution, or a client environment that looks nothing like yours.

That is where teams get into trouble. They over-weight the logo, the outcome metric, or the polish of the page, then under-weight the operational questions that usually decide project success. A better approach is simple: first decide whether the case is relevant, then decide whether the evidence is credible, then decide what follow-up proof you need.

Start with relevance before results

Read the case study as if the metrics do not exist yet. Your first job is to decide whether the client, problem, and delivery environment resemble your own closely enough for the story to matter.

Match the client and problem shape to your own environment

Do not anchor on industry alone. A healthcare case can still be weak evidence for you if it involved a low-risk internal tool while your project is a business-critical platform with uptime, compliance, and cross-team dependencies.

What usually matters more is problem shape:

- business criticality

- regulatory or security exposure

- stakeholder complexity

- data and integration complexity

- internal team maturity

- risk if the project slips or fails

A vendor that has handled similar constraints in another sector may be more relevant than a same-industry vendor with a shallow project. For example, a team that stabilized a legacy insurer platform under strict governance may be more comparable to your environment than a glossy healthcare redesign with no architecture or operating detail.

Check whether the delivery scope is truly comparable

Many case studies blur the line between end-to-end delivery and narrow contribution. That distinction changes what the story can legitimately prove.

A case about team augmentation can show that the vendor supplied strong engineers who worked well inside a client process. It does not prove the vendor can run discovery, own architecture decisions, manage risk, and deliver a full product build. The same is true for advisory or integration work.

Look for explicit scope markers such as discovery, design, development, testing, deployment, support, staffing model, and decision rights. If those are absent, ask. If the vendor mainly tuned APIs or supported a migration phase, do not treat that as proof of full-program leadership.

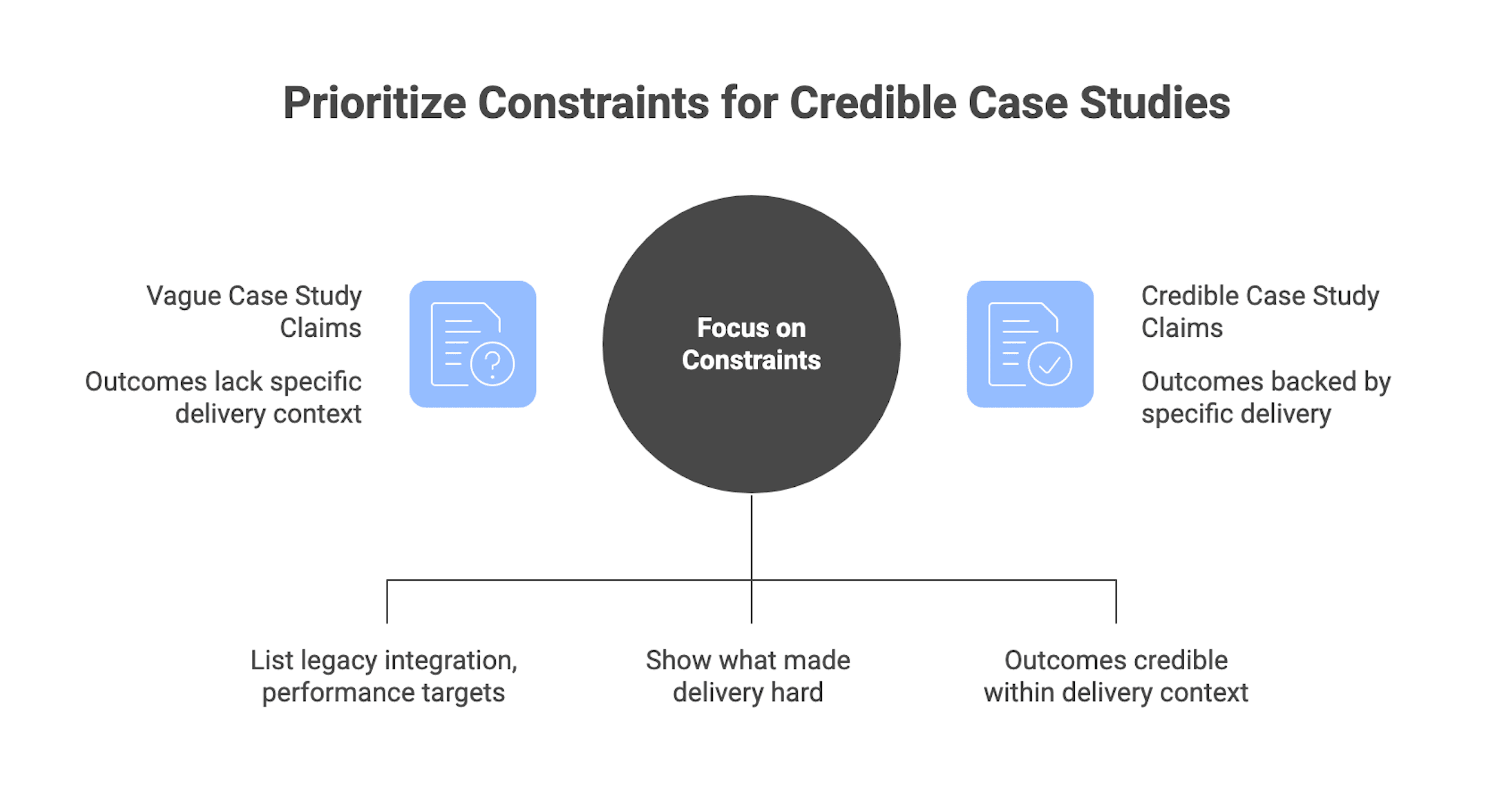

Look for constraints

Good case studies mention the conditions that made delivery difficult. That is what makes outcomes believable.

Useful constraints include legacy integration, performance targets, data migration issues, staffing limits, fixed deadlines, regulatory controls, and cross-team dependencies. When a vendor says they improved release speed, the more important question is what they were working around while doing it.

If a story only gives you outcomes and adjectives, your confidence should stay low. “Seamless migration” says little. “Migration completed while maintaining uptime, preserving data residency requirements, and coordinating with multiple internal teams” says far more.

A broader buyer’s guide can help frame where case-study review fits inside the full vendor selection process, but this page stays focused on proof evaluation.

What strong case-study evidence actually looks like

Once relevance is established, shift to evidence quality. Strong case studies do not need to reveal everything. They do need to reveal enough for a serious team to assess fit and credibility.

Here is a compact signal versus noise view you can apply quickly.

| Element | Strong signal | Weak signal | What to ask next |
| --- | --- | --- | --- |
| Context | Clear client profile, problem, and constraints | Generic industry label and vague challenge | What environment was this actually in? |
| Scope | Delivery phases, ownership, and role clarity | No clear boundary between vendor and client work | What did you own versus support? |
| Outcomes | Baseline, timeframe, and plausible attribution | Big percentages with no context | How was this measured and over what period? |

Use the table as a filter. If a case is weak on two or three of these elements (context, scope, outcomes), it should not carry much weight until the vendor fills the gaps.
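For teams that track reviews in a script or notebook, the filter can be written as a small lookup. The Python sketch below is illustrative only: the names `FOLLOW_UPS` and `review_case` are hypothetical, and treating two or more weak elements as the low-weight cutoff is one reading of the rule above, not a fixed standard.

```python
# Follow-up questions copied from the signal-versus-noise table.
FOLLOW_UPS = {
    "context": "What environment was this actually in?",
    "scope": "What did you own versus support?",
    "outcomes": "How was this measured and over what period?",
}


def review_case(weak_elements):
    """Return (low_weight, questions) for the elements a reviewer judged weak.

    low_weight is True when two or more elements are weak (assumed cutoff).
    """
    unknown = set(weak_elements) - set(FOLLOW_UPS)
    if unknown:
        raise ValueError(f"unknown elements: {sorted(unknown)}")
    # Iterate over FOLLOW_UPS so questions come back in table order.
    questions = [q for name, q in FOLLOW_UPS.items() if name in weak_elements]
    low_weight = len(questions) >= 2
    return low_weight, questions


if __name__ == "__main__":
    low_weight, questions = review_case({"scope", "outcomes"})
    print("Low weight until gaps are filled:", low_weight)
    for question in questions:
        print("-", question)
```

The output is a list of questions to send back to the vendor. The review itself still depends on a person judging which elements are weak.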

Signs the vendor is showing real work

Real delivery leaves traces. Even executive-level case studies should usually hint at decisions, methods, and trade-offs.

Look for details such as architecture choices, rollout phases, change-management steps, integration patterns, and governance mechanisms. A sentence about phased rollout, canary releases, or handoff boundaries tells you more than a paragraph about transformation.

Role clarity matters just as much. Who owned product decisions? Who approved releases? Who handled testing, security, and support? If the vendor cannot answer those questions clearly, you are not looking at strong proof.

What outcome claims should include to be believable

A good outcome claim has five parts:

- a baseline

- a timeframe

- a measurement method or source

- an owner of the metric

- a plausible attribution story

Without those elements, metrics are easy to admire and hard to trust. “Delivery improved by 3x” is weak. “Lead time fell from 45 days to 15 days over six months after a phased CI/CD rollout and governance change” is decision-useful.
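The five-part check and the arithmetic behind the lead-time example can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: `OutcomeClaim`, `missing_parts`, and `improvement_factor` are hypothetical names, and the field values are paraphrased from the example sentence above.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class OutcomeClaim:
    """One outcome claim; None means the case study does not state that part."""
    baseline: Optional[str] = None
    timeframe: Optional[str] = None
    measurement_method: Optional[str] = None
    metric_owner: Optional[str] = None
    attribution: Optional[str] = None


def missing_parts(claim: OutcomeClaim) -> list[str]:
    """List the parts of the five-part checklist that the claim leaves out."""
    return [f.name for f in fields(claim) if not getattr(claim, f.name)]


def improvement_factor(before: float, after: float) -> float:
    """For a lower-is-better metric, how many times smaller it became."""
    if after <= 0:
        raise ValueError("after must be positive")
    return before / after


# "Delivery improved by 3x": none of the five parts is stated.
weak = OutcomeClaim()

# "Lead time fell from 45 days to 15 days over six months after a phased
# CI/CD rollout and governance change."
strong = OutcomeClaim(
    baseline="45-day lead time",
    timeframe="six months",
    attribution="phased CI/CD rollout and governance change",
)

if __name__ == "__main__":
    print(missing_parts(weak))
    print(missing_parts(strong))
    print(improvement_factor(45, 15))
```

Both claims describe the same threefold improvement (45 / 15 = 3), but only the second gives a buyer something to verify. Even the stronger sentence leaves the measurement method and the metric owner unstated, so those become follow-up questions.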

Also separate persuasive metrics from vanity metrics. For custom software delivery, operational metrics, business process metrics, and adoption metrics usually tell you more than page views or generic engagement language.

How much transparency is enough

Confidentiality is real. A vendor may not be able to name the client or disclose exact numbers publicly.

That does not excuse empty storytelling. A credible vendor can still describe a top-five national bank, a regional healthcare provider, or a multi-region commerce platform in anonymized terms. They can usually share scope, constraint types, governance structure, and directional outcomes without exposing the client.

The line to watch is this: inability to share identifying details is normal; unwillingness to discuss methods, scope, or governance is not. If “client sensitivity” blocks every meaningful follow-up, treat the case study as low-weight evidence.

This is also where buyers should handle an edge case carefully. Some vendors, especially in regulated sectors, have weak or nonexistent public case studies but strong private evidence. In that situation, do not punish the lack of public marketing by itself. Shift the burden to private proof such as references, walkthroughs, redacted deliverables, and security documentation.

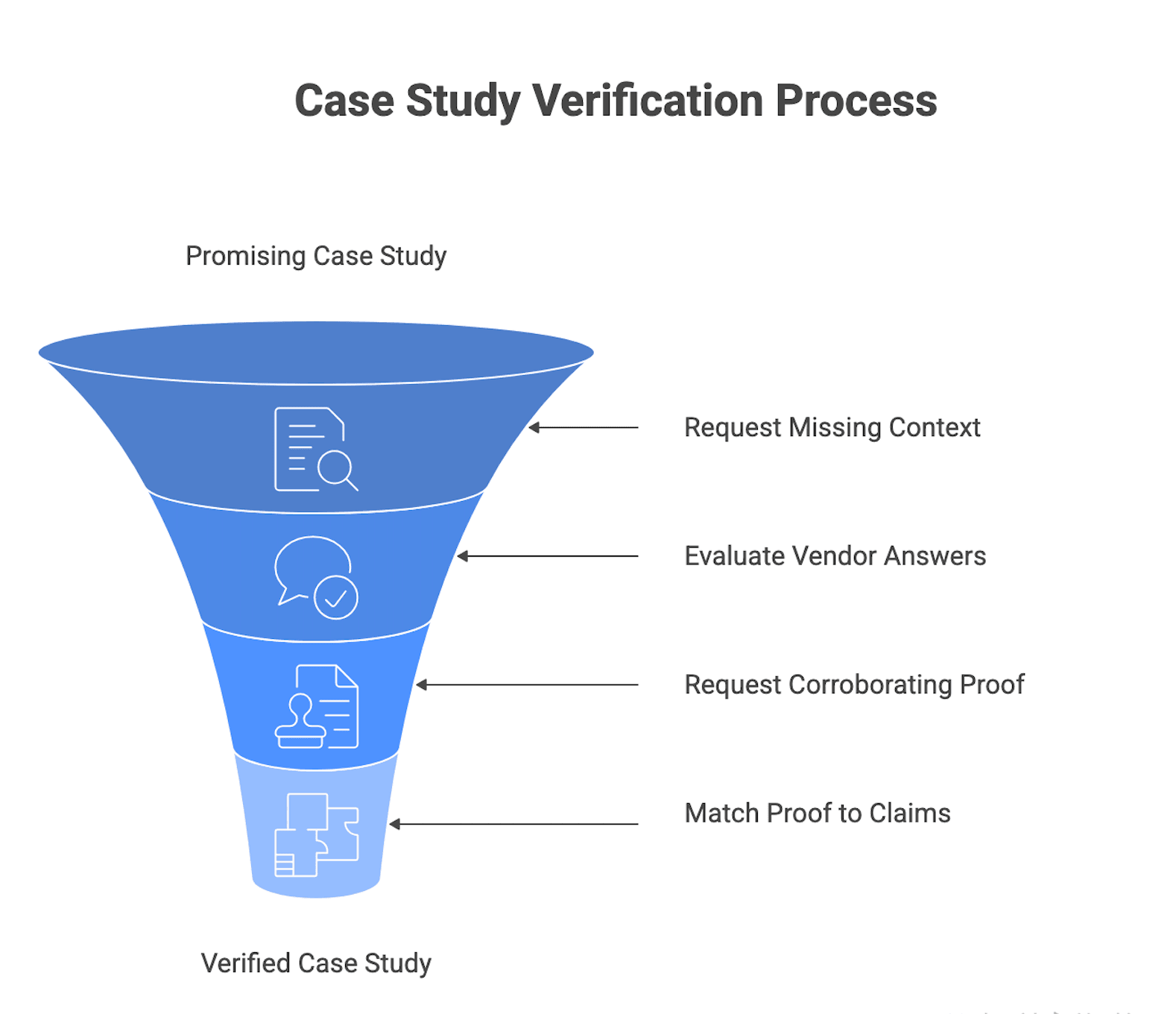

What to request when a case study looks promising

A promising case study should trigger verification. Your job is to convert what you think the story proves into a short list of tests.

Ask for the missing context behind the published story

Focus first on hidden context that changes comparability:

- What exactly did you own?

- What did the client own?

- Were other vendors involved?

- How stable was the team through the engagement?

- What commercial model shaped the work?

- Did scope, priorities, or timelines change?

Listen closely to how the vendor answers. Credible answers are specific and coherent. They acknowledge trade-offs, setbacks, or changing conditions. Deflective answers lean on vague language like “we collaborated closely” or “everything went smoothly.”

This is one of the fastest ways to separate a real delivery organization from a polished sales narrative.

Request corroborating proof where appropriate

Ask for proof that matches the claim you are testing. Do not ask for everything at once.

For promising case studies, the most useful follow-up artifacts are:

- client references

- architecture or solution walkthroughs

- sample deliverables such as backlogs, test plans, or runbooks

- security and quality documentation

- key team resumes

- demos or sandbox access where relevant

Match the request to the claim. If the vendor says they reduced incidents, ask for a redacted incident trend view and a release-process walkthrough. If they claim secure delivery in a sensitive environment, ask for secure development lifecycle material and relevant security documentation.

This is where a vendor evaluation scorecard can help organize evidence across finalists. That page covers scoring in depth; this article stays focused on reviewing the evidence itself.

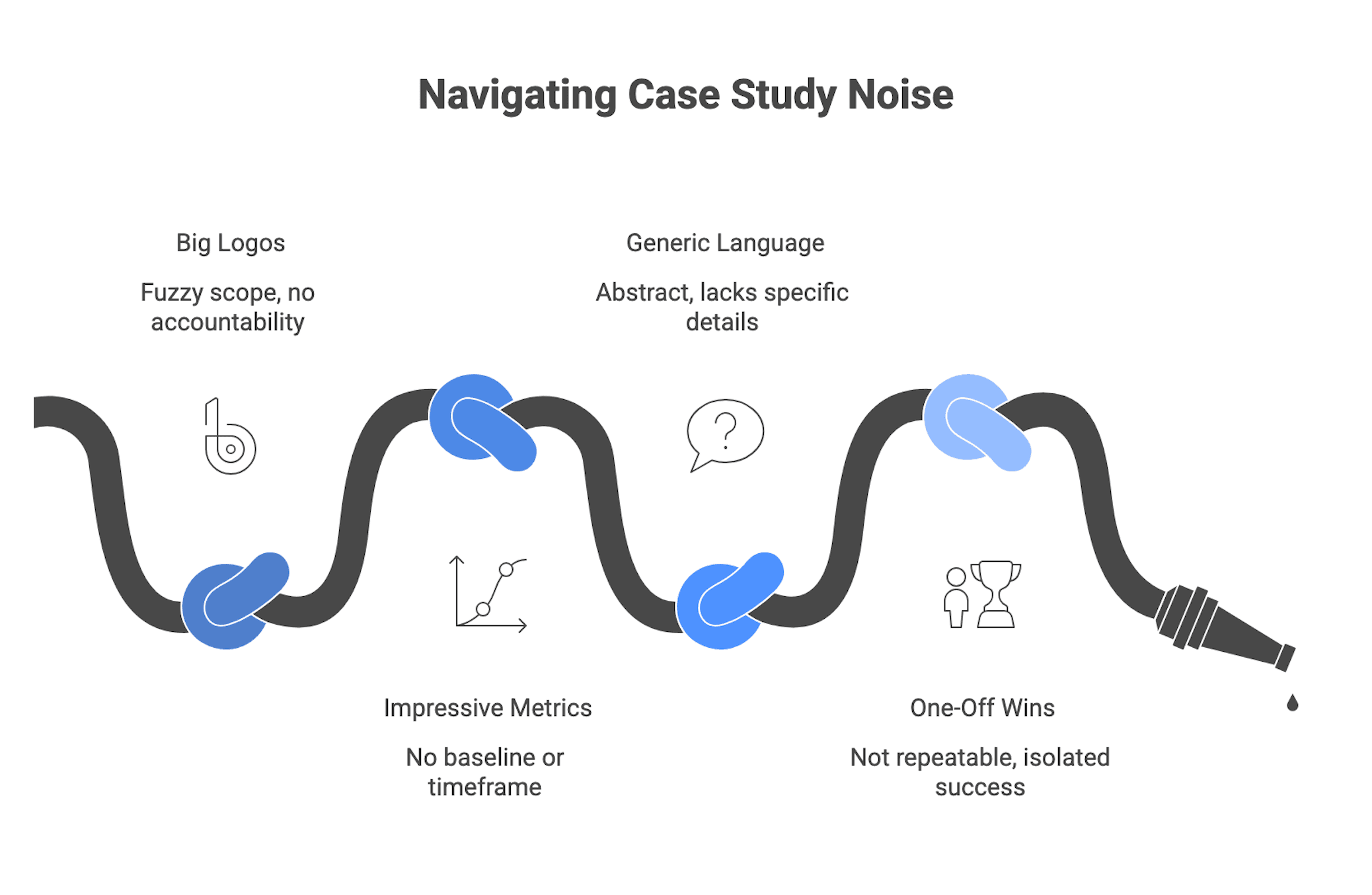

Common noise patterns and how to test them

Noise patterns are recurring ways case studies sound stronger than they are. The goal is to downgrade weak evidence quickly and move on to better proof.

Big logos with fuzzy scope

A famous client name may only show the vendor passed procurement and contributed something narrow.

Ask one blunt question: if the project had failed, would the vendor have been materially accountable? If not, the logo is a weak signal. Treat it as evidence of access to the client, not of accountability for the outcome.

Impressive metrics with no baseline or timeframe

Percentage improvements without context are one of the most common traps. No baseline means no meaning. No timeframe means no durability.

Ask: From what starting point? Over what period? Who measured it? What else changed during that time? If the answers stay vague, treat the metric as promotional language.

Generic transformation language that could describe almost any project

Many weak case studies could swap client names and still read the same. That is a sign the story is too abstract to help a buyer decide anything.

Run a simple abstraction test. Remove the client name and industry. If the story still fits almost any digital project, it is probably missing the system, process, and governance details you need.

One-off wins presented as repeatable capability

One strong result does not prove a repeatable operating model. It may reflect a unique client condition, a favorable team dynamic, or an isolated success.

Look for patterns across multiple case studies, references, and process descriptions. Repeated evidence of the same practices matters more than a single standout story.

Minimum acceptable baseline before a case study influences your shortlist

You do not need perfect proof before a case study becomes useful. You do need a minimum threshold.

At a practical level, a case study should show:

- a clear client profile and problem context

- defined engagement type and delivery scope

- some constraint detail

- outcomes with at least directional context

- enough role or process detail to understand the vendor’s contribution

If a case misses most of that, it is still marketing. You can keep the vendor in play, but only behind stronger proof requests.
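The threshold can be sketched as a simple check. The Python below is an illustration under stated assumptions: the criterion keys and the `meets_minimum` function are hypothetical names, and "misses most" is read here as missing three or more of the five items.

```python
# The five minimum items listed above, as short hypothetical keys.
MINIMUM_CRITERIA = (
    "client_profile_and_problem",
    "engagement_type_and_scope",
    "constraint_detail",
    "outcomes_with_context",
    "role_or_process_detail",
)


def meets_minimum(present: set[str]) -> bool:
    """Return False when the case study misses most of the minimum items.

    'Most' is assumed to mean more than half, i.e. three or more of five.
    """
    unknown = present - set(MINIMUM_CRITERIA)
    if unknown:
        raise ValueError(f"unknown criteria: {sorted(unknown)}")
    missing = len(MINIMUM_CRITERIA) - len(present)
    return missing * 2 < len(MINIMUM_CRITERIA)


if __name__ == "__main__":
    case = {"client_profile_and_problem", "constraint_detail"}
    if meets_minimum(case):
        print("Usable as shortlist evidence")
    else:
        print("Still marketing: keep the vendor only behind stronger proof requests")
```

A stricter team could require all five items for high-risk work by changing the comparison. The cutoff is a policy choice rather than something the checklist dictates.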

Lower-risk engagements

For smaller or lower-risk work, your threshold can be lighter. A good contextual match on the technical problem, clear scope, one reference, and a brief process walkthrough may be enough.

The key is proportionality. Do not build a heavyweight diligence process for a contained dashboard, prototype, or non-critical integration. Stay disciplined, but keep the evidence burden reasonable.

Higher-risk or business-critical engagements

For core systems, regulated data, or high-availability services, the threshold rises sharply. One public case study should not move a vendor to finalist status by itself.

Expect multiple relevant case studies, deeper role clarity, stronger metric context, and corroborating artifacts such as security documentation, architecture walkthroughs, and references from comparable environments. If the vendor cannot provide that depth, either downgrade them for this opportunity or constrain the initial remit.

Turn case-study review into a better shortlist decision

The right output of case-study review is not a feeling. It is a clearer shortlist and a better next question.

Use a simple flow. First, classify each case as highly comparable, directionally relevant, or mostly superficial. Second, separate high-signal evidence from marketing-heavy noise. Third, design a few artifact requests aimed at the biggest remaining assumptions. Fourth, update shortlist confidence based on the quality of the vendor’s answers.

That keeps case studies in their proper role. They help you form hypotheses about capability, then test those hypotheses through due diligence.

When you are ready to move from proof review into selection mechanics, use the shortlisting framework for process depth and the vendor selection hub for the related guides.

In the end, the key lens is simple: do not ask whether a case study is impressive. Ask whether it gives you decision-relevant evidence about relevance, scope, constraints, and outcomes. If it does, turn it into targeted follow-up requests. If it does not, keep it in the marketing pile and move on.