Generative AI Fundamentals: Understanding Generative Models

Imagine a world where machines don't just analyze data but create compelling narratives, generate lifelike images, and redefine what's possible. This is the promise of generative AI, a force revolutionizing industries and redefining human-computer interaction.

Generative AI is the artisan of the digital age, breathing creativity into the world of data. At its core are transformer models like GPT-3, a language model with a staggering 175 billion parameters. This virtual muse translates thoughts into eloquent prose, code, or even Shakespearean poetry.

Understanding generative models unlocks a treasure trove of possibilities, including advanced techniques such as transfer learning in generative AI. From healthcare to the creative arts, generative AI enhances diverse fields. Yet, the art of crafting prompts, known as Prompt Engineering, is the key to optimizing these models. A well-crafted prompt directs the symphony of algorithms, making the fusion of human touch and machine intelligence a reality.

This blog delves into the fundamentals of generative AI: what it is, why it matters, how transformer models power it, and how well-crafted prompts lead to better outcomes. This technology is poised to reshape industries and unlock possibilities we can only begin to imagine.

But what exactly is generative AI, and how does it work? Let’s take a closer look.

Generative AI: A Broader Overview

In the dynamic landscape of artificial intelligence, one term that resonates with endless possibilities is Generative AI. It's about crafting, creating, and imagining.

Let's embark on a journey to understand the essence of generative AI, its divergence from traditional machine learning, and the fascinating models driving this technological evolution.

Demystifying Generative AI

At its core, generative AI is a subset of machine learning, empowering systems to learn from existing data and utilize that knowledge to create entirely new content. It's not only about predicting future outcomes or classifying data - it's also about mimicking the creativity and ingenuity of the human mind.

Generative AI vs. Traditional Machine Learning

Understanding the key distinctions between generative AI and traditional machine learning is crucial to grasping the unique potential of each approach. In traditional machine learning, algorithms act as decision-making architects. They learn patterns from data and use those patterns to make predictions or classifications. Generative AI pushes beyond this, venturing into the domain of creation. Its focus transcends pattern recognition: it aims to model those patterns well enough to generate entirely new data.
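To make "learning patterns well enough to generate new data" concrete, here is a minimal sketch in Python. It is a toy word-pair (bigram) model, nowhere near a modern generative model, and the corpus and function names are made up for illustration. It only records which word tends to follow which, then samples new sentences from those observations.

```python
import random
from collections import defaultdict

def train_bigram_model(corpus):
    """Record, for each word, the words that follow it in the corpus."""
    followers = defaultdict(list)
    for sentence in corpus:
        words = ["<s>"] + sentence.lower().split() + ["</s>"]
        for current, nxt in zip(words, words[1:]):
            followers[current].append(nxt)
    return followers

def generate(followers, rng, max_words=12):
    """Sample a new sentence one word at a time from the learned patterns."""
    word, output = "<s>", []
    while len(output) < max_words:
        word = rng.choice(followers[word])
        if word == "</s>":  # end-of-sentence marker reached
            break
        output.append(word)
    return " ".join(output)

# Hypothetical four-sentence training corpus.
corpus = [
    "the model writes a poem",
    "the model paints a picture",
    "the artist writes a story",
    "the artist paints a poem",
]
followers = train_bigram_model(corpus)
rng = random.Random(0)
samples = [generate(followers, rng) for _ in range(5)]
for s in samples:
    print(s)
```

Because the model samples rather than looks things up, it can produce sentences that never appeared in the corpus, which is the essential difference from a classifier that can only pick one of its predefined labels.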

Versatility: Traditional vs. Generative Approach

One key differentiator lies in the versatility of each approach. Most traditional machine learning techniques are akin to specialists, meticulously trained for specific tasks. A sentiment analysis model, for instance, excels at predicting the emotional tone of the text, but only within the confines of the predefined sentiment classes. Many businesses employ sentiment analysis solutions to unlock deeper customer insights and tailor their strategies more effectively. In contrast, generative AI models, like Large Language Models (LLMs), resemble Swiss army knives, equipped with a diverse arsenal of creative abilities. An LLM, presented with a new prompt, can seamlessly switch gears from sentiment analysis to general language generation, effortlessly adapting to the task at hand.

However, it's important to acknowledge that the line between traditional ML and generative AI isn't always clearly defined. Some traditional ML techniques can also generate data, such as super-resolution image models or language models trained for specific tasks like text summarization. The key difference lies in the approach and potential application. Traditional methods are often tailored to specific tasks, making them highly accurate within their defined scope. Generative AI, on the other hand, adopts a more open-ended approach, prioritizing the ability to create diverse and potentially novel forms of data, even at the cost of some accuracy in specific cases.

Ultimately, both traditional machine learning and generative AI represent powerful tools with distinct strengths and weaknesses. Choosing the right approach depends on the specific problem you're trying to solve. If you require highly accurate predictions within a well-defined context, traditional methods might be your best bet. But if you seek to unlock creative potential and explore uncharted territory, generative AI offers a world of possibilities, with hyper-personalization as a likely next big leap.

Exploring the Types of Generative AI Models: GANs and VAEs

The world of generative AI encompasses a diverse range of models, each with its own unique capabilities. Two prominent generative AI model examples are Generative Adversarial Networks (GANs) and Variational Autoencoders (VAEs).

a. Generative Adversarial Networks (GANs):

GANs operate on the premise of competition, featuring two neural networks – a generator and a discriminator – locked in a creative duel. The generator creates content, and the discriminator evaluates its authenticity. This back-and-forth battle hones the generator's ability to produce content that is increasingly indistinguishable from real data.

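For example, NVIDIA's StyleGAN is a GAN that generates highly realistic images, such as human faces. Tools like DeepArt, which renders photos in the style of famous artists, are often mentioned alongside GANs, although DeepArt is based on neural style transfer rather than an adversarial setup. For a similar viral case study, read our blog on Ghibli AI.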

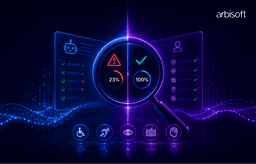

GANs are the artistic powerhouses of AI, capable of generating incredibly realistic and creative content. But how do they work? This infographic dives into the two sides of a GAN: the Generator, a visionary artist, and the Discriminator, a watchful critic. Each pushes the other to improve, and the result is ever more convincing content that blurs the line between reality and artificial ingenuity.
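The generator-versus-discriminator loop can be sketched in a few lines of plain Python. This is a deliberately tiny illustration under stated assumptions, not how production GANs are built: the "data" is a single number drawn from a bell curve centered at 4, the generator is a straight line `a * z + b`, the discriminator is a one-input logistic unit, and the gradients are written out by hand. All names and settings here are hypothetical.

```python
import math
import random

def sigmoid(t):
    """Numerically stable logistic function."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)

def train_toy_gan(steps=2000, lr=0.01, real_mean=4.0, seed=0):
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator: fake = a * z + b
    w, c = 0.0, 0.0  # discriminator: D(x) = sigmoid(w * x + c)
    for _ in range(steps):
        real = rng.gauss(real_mean, 1.0)  # one sample of "real" data
        z = rng.gauss(0.0, 1.0)           # random noise fed to the generator
        fake = a * z + b

        # Discriminator step: minimise -log D(real) - log(1 - D(fake)).
        d_real = sigmoid(w * real + c)
        d_fake = sigmoid(w * fake + c)
        grad_w = -(1.0 - d_real) * real + d_fake * fake
        grad_c = -(1.0 - d_real) + d_fake
        w -= lr * grad_w
        c -= lr * grad_c

        # Generator step: minimise -log D(fake), the non-saturating loss.
        d_fake = sigmoid(w * fake + c)
        grad_fake = -(1.0 - d_fake) * w  # gradient with respect to the fake sample
        a -= lr * grad_fake * z
        b -= lr * grad_fake
    return (a, b), (w, c)

(gen_scale, gen_shift), (disc_w, disc_c) = train_toy_gan()
print(f"generator: fake = {gen_scale:.2f} * z + {gen_shift:.2f}")
```

The discriminator is rewarded for telling real from fake, and the generator is rewarded for fooling it, so the generator's shift `b` is pushed toward where the real data lives. GAN training is known to oscillate, so the exact numbers printed matter less than the back-and-forth structure of the two updates.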

b. Variational Autoencoders (VAEs):

VAEs take a different approach by introducing a probabilistic element to the generation process. The model learns a probability distribution over a compressed (latent) representation of the input data and samples from it to generate new, similar data points. This introduces a level of randomness, fostering creativity in the generated outputs.

For example, Google's Magenta project uses a VAE (MusicVAE) to generate music compositions with a unique blend of style and randomness.
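The probabilistic step at the heart of a VAE can be illustrated without a neural network. The sketch below assumes an encoder has already produced a mean and a log-variance for each latent dimension (the numbers are made up), and shows two standard pieces: drawing a latent sample with the reparameterization trick, and the KL term that keeps the learned distribution close to a standard normal. The decoder that turns a latent sample back into data is omitted.

```python
import math
import random

def reparameterize(mu, log_var, rng):
    """Draw z = mu + sigma * eps with eps ~ N(0, 1), per latent dimension."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
            for m, lv in zip(mu, log_var)]

def kl_to_standard_normal(mu, log_var):
    """KL divergence from N(mu, sigma^2) to N(0, 1), summed over dimensions."""
    return 0.5 * sum(m * m + math.exp(lv) - 1.0 - lv
                     for m, lv in zip(mu, log_var))

# Hypothetical encoder output for one input: two latent dimensions.
mu, log_var = [0.5, -1.0], [0.0, -0.5]

rng = random.Random(0)
z_samples = [reparameterize(mu, log_var, rng) for _ in range(3)]
kl = kl_to_standard_normal(mu, log_var)

for z in z_samples:
    print([round(value, 3) for value in z])
print("KL term:", round(kl, 3))
```

Each call to `reparameterize` yields a different latent point for the same input, which is where the variety in a VAE's outputs comes from.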

Transformer Models: The Backbone of Generative AI

Speaking of generative AI, transformer models are not just algorithms - they are the virtuosos of natural language processing (NLP), reshaping the way machines understand and generate human-like text.

Understanding Transformer Models

Transformer models are the brilliant conductors directing the symphony of generative AI. They don't rely on sequential processing. Instead, they employ a parallelized mechanism that processes all parts of a sequence simultaneously. This not only accelerates computation but also allows the model to capture complex dependencies in data with unparalleled finesse.

Architectural Brilliance

At the heart of transformer models lies the attention mechanism in language models, a revolutionary concept in the realm of NLP. This mechanism enables the model to focus on different parts of the input sequence with varying degrees of attention. In essence, it mimics the human ability to prioritize information, making transformer models exceptionally adept at understanding context and generating coherent responses.
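As a rough illustration of "varying degrees of attention", here is scaled dot-product attention written in plain Python. The token vectors are made up, and real transformers first pass inputs through learned projection matrices and run many attention heads in parallel, all of which this sketch leaves out.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    scale = math.sqrt(len(keys[0]))
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(dimension).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        # Each output is a weighted average of the value vectors.
        out = [sum(w * v[d] for w, v in zip(weights, values))
               for d in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Three hypothetical 2-dimensional token vectors. In self-attention the same
# vectors act as queries, keys, and values.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(tokens, tokens, tokens)

for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` shows how much one token attends to every token in the sequence. The first token, for instance, gives more weight to itself and the third token (which share a component with it) than to the second.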

Functionality

The original transformer architecture consists of an encoder and a decoder, each comprising multiple layers. The encoder processes the input sequence, while the decoder generates the output sequence. Many generative language models, including the GPT family, use only the decoder stack. The self-attention mechanism within these layers ensures that each word in the sequence contributes contextually to the generation process. This not only enhances the model's understanding but also empowers it to generate more nuanced and coherent responses.

Applicability

1. GPT-3 (Generative Pre-trained Transformer 3)

GPT-3 is a behemoth, boasting a colossal 175 billion parameters. This immense scale allows it to comprehend and generate human-like text across a myriad of contexts. The versatility of GPT-3 is astounding. Its capabilities span a broad spectrum of tasks, from writing essays to composing poetry and even generating code snippets.

2. LaMDA (Language Model for Dialogue Applications)

LaMDA, developed by Google, is designed specifically for natural language conversations. Its unique capability lies in engaging in open-ended, free-flowing dialogues, making it an ideal choice for interactive conversational AI.

3. LLaMA (Large Language Model Meta AI)

Meta's contender, LLaMA, comes in multiple sizes (7B to 65B parameters). Despite being much smaller than GPT-3, the 13B version outperforms it on most benchmarks according to Meta's reported results, showcasing its research potential and accessibility and highlighting how small AI models and tools are making a big splash in today's AI landscape. Additionally, LLaMA's training data includes text in 20 languages, expanding its linguistic grasp.

4. GPT-4 (Generative Pre-trained Transformer 4)

OpenAI's GPT-4 takes the language model game a step further. OpenAI has not disclosed its parameter count. GPT-4 is multimodal, meaning it can accept images as well as text as input and produce text output, offering deeper context understanding and interpretation. While not yet at the human level, GPT-4 showcases significant progress in understanding complex inputs.

These are just some of the prominent language models making waves in the AI landscape. Each has its unique strengths and weaknesses, making them suitable for different applications. As further research and development unfold, we can expect even more advanced and nuanced language models to emerge, pushing the boundaries of human-computer interaction.

The Art of Prompting: Guiding Generative Models

Getting algorithms to transform data into creative expressions involves mastering an art - the art of prompting. It's not just about instructing machines - it's about delicately guiding them to produce the outputs that you’re looking for.

The Power of Well-Crafted Prompts

A well-crafted prompt is the linchpin that determines the output from generative models. It serves as the guiding force, steering the model toward producing content that aligns with specific objectives. Whether it’s generating text, images, or code, the precision and clarity in a prompt are pivotal in producing the desired response from the model.

Role of Prompts in Guiding Model Output

Consider a generative model as a versatile artist, ready to create upon receiving the right cues. The prompt acts as the creative brief, setting the tone, style, and context for the output. It's the difference between a vague instruction and a clear, nuanced directive. A well-crafted prompt inspires the model to infuse its outputs with a human-like touch, aligning them with the intricacies of the given task.
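The "creative brief" idea can be made tangible with a small helper that assembles a prompt from labeled parts. This is only a sketch: the field names and the example product are invented for illustration, no particular model or API is assumed, and the resulting string would simply be passed to whichever generative model you use.

```python
def build_prompt(task, context="", style="", output_format="", examples=()):
    """Assemble a prompt from labeled parts, skipping any that are empty."""
    parts = [f"Task: {task}"]
    if context:
        parts.append(f"Context: {context}")
    if style:
        parts.append(f"Tone and style: {style}")
    if output_format:
        parts.append(f"Output format: {output_format}")
    # Optional worked examples (few-shot prompting), given as (input, output) pairs.
    for i, (example_input, example_output) in enumerate(examples, start=1):
        parts.append(f"Example {i} input: {example_input}")
        parts.append(f"Example {i} output: {example_output}")
    return "\n".join(parts)

# A vague instruction versus a clear, nuanced directive.
vague = build_prompt("Write about our product.")
specific = build_prompt(
    task="Write a product announcement for a note-taking app.",
    context="The app now supports offline sync; the audience is existing users.",
    style="Friendly and concise, no jargon.",
    output_format="Three short paragraphs followed by a one-line call to action.",
)
print(specific)
```

The second prompt gives the model the tone, audience, and shape of the answer up front, which is exactly the information a vague instruction leaves it to guess.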

For more insights on crafting effective prompts to unleash the full potential of generative models, read this blog post on this very topic.

Generative AI: Embracing Magic

Generative AI is a true maestro - infusing its creative touch across diverse industries. Let's explore its real-world applications, where the ability to create, discover, and synthesize is reshaping industries and transforming our interaction with technology.

1. Content Creation

Generative AI serves as a muse for content creators, from crafting poetry and generating code to scripting narratives and composing music. Models like OpenAI's GPT-3 show this versatility, producing Shakespearean-style sonnets and Python code alike.

2. Drug Discovery Breakthroughs

In healthcare, generative AI accelerates drug discovery by designing new compounds with improved properties. Companies like Atomwise apply deep learning to analyze molecular structures and predict potential drug candidates, with the aim of reducing the time and resources traditionally required for drug discovery.

3. Image and Video Synthesis

Generative AI, exemplified by models like NVIDIA's StyleGAN, is the artist behind realistic image and video synthesis. From transforming photos into artworks to creating lifelike scenes in videos, this technology paints realism digitally.

Another example is DeepArt, which uses neural style transfer to transform photos into artworks inspired by renowned artists, showcasing the aesthetic potential of generative techniques.

4. AI Assistants: Learning to Talk, Not Replace Humans

Powered by generative AI, assistants are becoming more intuitive and engaging companions. They understand context, respond naturally, and even hold open-ended conversations (think Google's LaMDA). While not human replacements yet (emotions and irony are still tricky!), they're evolving friends, not just scripted helpers.

Google's LaMDA language model is a good example: it enables conversational AI applications in which virtual assistants engage in open-ended, free-flowing dialogue, bringing a more natural style of interaction to users.

Ethical Considerations and the Future of Generative AI

The emergence of generative AI introduces not just opportunities but a host of ethical considerations that demand our attention. As we venture into this landscape, we must address the nuanced challenges, acknowledge the ethical implications, and envision a future where generative AI becomes a force for positive change.

1. Bias

Challenge: Generative AI models, trained on vast datasets reflective of societal biases, may perpetuate and amplify existing prejudices.

Example: Language models can exhibit gender or racial bias in their outputs, reflecting the biases present in their training data.

2. Privacy

Challenge: Generative AI's capability to synthesize realistic content raises concerns about the potential misuse of personal information.

Example: Deepfake technology leverages generative AI to create convincingly realistic videos of individuals, posing risks to personal privacy.

3. Misinformation

Challenge: The ease with which generative AI can generate content may contribute to the spread of misinformation and deepfake narratives.

Example: AI-generated text or videos can be used for deceptive purposes, making it harder to verify the authenticity of information.

Generative AI: A Creative Canvas, Not a Finish Line

Generative AI examples span a spectrum of complexity and application, showcasing the technology's versatility and advancement. In the realm of image generation, state-of-the-art Generative Adversarial Networks (GANs) can create hyper-realistic images, such as faces and landscapes, that are often hard to tell apart from real photographs. In natural language processing, models like GPT-3 are capable of generating coherent, context-aware text that reflects the structure and semantics of language. In drug discovery, generative AI can propose molecular structures that have potential therapeutic effects, accelerating the process of drug development. In the field of robotics, generative AI is used to simulate complex environments and scenarios, enabling robots to learn and adapt to real-world challenges.

These generative AI examples underscore the transformative impact of generative AI across scientific, industrial, and technological domains.

We've seen the magic of GPT-3's poetry and StyleGAN's artistry. Generative AI isn't just technology; it's a co-creator, blurring the lines between human and machine. But this power demands responsibility: bias, privacy, and misinformation loom, demanding ethical innovation.

But the journey isn't over. Let's explore responsibly, refine our understanding, and push boundaries. With ethics as our beacon, we can envision a future where AI fuels human expression. The canvas is blank, and the algorithms await. Let's create, together.